Preface

Enterprises today find themselves caught in an uncomfortable contradiction. They want to operate with the speed, intelligence, and adaptability that modern AI makes possible, yet they are attempting to graft those ambitions onto architectures that were never designed to support them. For two decades, organizations invested heavily in data lakes, warehouses, ETL pipelines, BI layers, governance frameworks, and analytics platforms that were perfectly suited to producing dashboards and compliance reports. They were systems built to interpret the past, not anticipate the future. They were built for a world where people, not machines, were the primary agents of decision-making; where insight arrived in monthly cycles, not continuously; where processes were adjusted quarterly, not in real time.

The rise of generative AI exposed this architectural mismatch with brutal clarity. Suddenly, enterprises wanted to interrogate their business in natural language, execute decisions through autonomous agents, trigger workflows based on patterns emerging in operational data, and integrate models that needed unified context rather than siloed tables. But the architecture underneath could not support this. It was too slow, too brittle, too human-dependent, too fragmented across organizational boundaries. The enterprise had built highly refined versions of systems that were fundamentally unsuited to the AI era.

The failure was not one of imagination. Enterprises understood the power of AI. Nor was it a failure of talent: teams of data scientists, engineers, and analysts worked heroically to adapt legacy systems to new needs. It was a failure of architecture itself. The existing systems were designed for a different era. This is why a new architectural pattern has begun emerging among the organizations truly succeeding with AI at scale.
Whether implemented with C3 AI, Palantir Foundry and AIP, IBM’s watsonx ecosystem, NVIDIA’s AI Enterprise stack, or hyperscale services from AWS, Azure, and Google Cloud, the same structure appears again and again. It is a unified substrate, a single fabric where data, semantics, reasoning, action, and human interaction coexist seamlessly. It is the AI Factory – not a single product but a pattern, not a metaphor but a system, not a dashboard but an operating model. And like all true operating models – ERP, cloud computing, DevOps – the AI Factory does not enhance the old enterprise. It replaces it with something new.

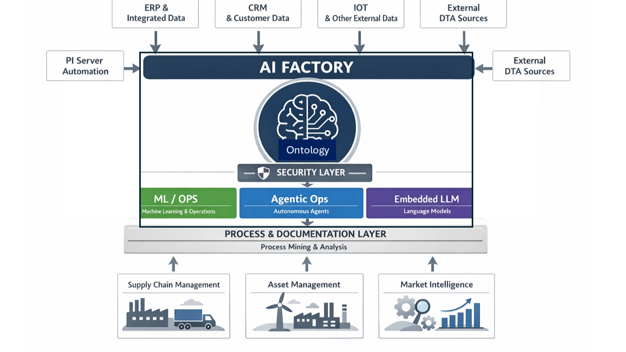

Chapter 1

The first transformation the AI Factory brings is the shift from AI as a collection of isolated projects to AI as a scalable production system. In most enterprises today, AI exists as a scatter of disconnected initiatives. A supply chain team runs a pilot. A finance group builds a forecasting proof of concept. A manufacturing site deploys a predictive maintenance model. A customer service group experiments with generative assistants. Each pilot has its own pipelines, its own data extracts, its own logic, its own dashboards, and its own attempt to reconcile inconsistent data.

What emerges is not innovation but entropy. Each new project increases the organization’s architectural burden. The company may appear active – dozens of pilots, a growing AI portfolio – but every pilot is an island. There is no shared semantic layer. No reusable structures. No compounding effect. Nothing built in one project meaningfully reduces the cost or time required for the next. Everything must be reinvented. This is not scale. It is artisanal AI disguised as transformation.

The AI Factory ends this cycle by creating a shared substrate beneath all AI efforts. Integrations made once become reusable across all future use cases. Semantic definitions established once – whether describing a turbine, a loan, a shipment, or a patient encounter – become canonical. Workflows developed for one process become templates for others. Agentic behaviors developed for one domain inform similar behaviors across adjacent domains. The enterprise begins to gain leverage. Work invested in one place accelerates value everywhere else.

The shift from project mode to production mode is not a tooling decision; it is an architectural one. It requires the organization to adopt virtualization instead of pipelines, semantics instead of field mappings, agentic execution instead of brittle automations, adaptive workflows instead of rigid routings, and unified interfaces instead of fragmented screens.
Only when this architecture is in place does AI stop being a series of experiments and become a foundational capability. The core of that foundation – the part that makes everything else possible – is the virtualization of data and the unification of its meaning.

Chapter 2

Data virtualization may appear at first glance to be a technical refinement, something modest and incremental, but in reality it is one of the most profound architectural shifts an enterprise can make. Traditional data strategies rely on movement. Data is extracted from operational systems, loaded into lakes or warehouses, transformed into analytical structures, reshaped into semantic layers, aggregated into marts, and then pushed into dashboards or external tools. Every movement introduces variation. Every transformation introduces risk. Every team that touches the data interprets it slightly differently. Meaning becomes fractured across countless layers of representation.

In such a landscape, it is unsurprising that enterprises struggle to build intelligence. When the meaning of data is unstable, no model can be stable either. When data is scattered across formats and contexts, no AI system can perceive the business coherently. What one system calls an order, another calls a transaction. What one team recognizes as churn, another interprets as category migration. Humans adapt to this ambiguity by developing instincts and local knowledge, but machines have no such coping mechanisms. They need structure. They need consistency. They need meaning.

C3 and Palantir understood this reality before most of the industry did. They recognized that the traditional approach to data – collecting, moving, reshaping, remapping – is fundamentally incompatible with any ambition of intelligence at enterprise scale. Intelligence requires unity of meaning, not unity of storage. It requires data to be interpreted consistently across all contexts, not simply held in a central location. So instead of building ever more refined ways to consolidate data, they reimagined what a data layer could be. In C3’s architecture, data is not moved into the platform. It is virtualized.
Systems remain in place, but their information is made directly accessible through a model-driven layer that interprets their structures, harmonizes their schemas, and exposes their meaning through a set of semantic types. These types are not tables. They are conceptual entities that mirror the real world. They describe what a turbine is, what an invoice represents, how an order behaves, how a customer relates to transactions, what temporal relationships exist between events. They unify meaning. They turn scattered representations into a coherent ontology of the business, one that is consistent no matter where the data physically resides.

Palantir’s approach differs in execution but not in principle. In the Ontology, objects become the central abstraction of enterprise intelligence. A shipment is not a spreadsheet row or a JSON record; it is an entity with relationships. It is connected to the order it fulfills, the customer expecting it, the route it travels, the temperature logs monitoring its condition, the carrier moving it, the SLA defining its constraints, and the risks that may affect its outcome. The Ontology does not store the data. It contextualizes it. It becomes the living map of the enterprise’s reality. Every model, every agent, every workflow, every application can reason through this map with clarity.

IBM approaches this challenge through governance. For IBM, the central failure of most enterprise data is not simply fragmentation but the lack of formal accountability around meaning. Watsonx ensures that data carries with it definitions, lineage, policies, and compliance frameworks. While less prescriptive about the semantic abstraction itself, IBM ensures that meaning is controlled, consistent, and legally defensible.

The result of virtualization plus semantics is that the enterprise finally acquires something like a cognitive system. It gains the ability to understand itself in real time.
Instead of pipelines constantly shuffling data through an obstacle course of transformations, information flows in its native form, interpreted by a semantic layer that preserves its identity. Intelligence becomes possible not because the data has been centralized, but because its meaning has been unified.

This unification produces a remarkable effect. Teams that once spent months reconciling fields and definitions suddenly find themselves speaking the same conceptual language. Models built in one domain begin to generalize into another. Dashboards shrink in number because interpretation becomes inherent in the architecture rather than duplicated across tools. The enterprise becomes lighter, more flexible, more aware.

Through virtualization and semantic modeling, the enterprise develops a brain – not a metaphorical one, but a structural one. And once the enterprise has a brain, it becomes possible to give it a voice.
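To make "what one system calls an order, another calls a transaction" concrete, here is a minimal sketch of read-time virtualization. It assumes two hypothetical sources, an ERP that stores `orders` (amounts in cents) and a commerce platform that stores `transactions`, and maps both into one canonical `Order` type only when a query runs. The `Order` type, the adapter functions, and the `SemanticLayer` class are illustrative names, not C3 types or Palantir Ontology APIs.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Iterable, List, Optional, Tuple

# Hypothetical records that stay in their source systems; nothing is copied.
ERP_ORDERS = [
    {"ord_no": "A-100", "cust": "C1", "amt_cents": 125000, "placed": "2024-03-01"},
]
COMMERCE_TRANSACTIONS = [
    {"txn_id": "T-9", "customer_id": "C2", "total": 310.50, "ts": "2024-03-02T14:05:00"},
]

@dataclass(frozen=True)
class Order:
    """Canonical semantic type: one meaning of 'order', whatever the source calls it."""
    order_id: str
    customer_id: str
    amount: float      # currency units (the ERP stores cents)
    placed_on: date
    source: str        # where the record physically lives

def from_erp(rec: dict) -> Order:
    return Order(rec["ord_no"], rec["cust"], rec["amt_cents"] / 100,
                 date.fromisoformat(rec["placed"]), "erp")

def from_commerce(rec: dict) -> Order:
    # The commerce platform calls the same concept a "transaction".
    return Order(rec["txn_id"], rec["customer_id"], rec["total"],
                 date.fromisoformat(rec["ts"][:10]), "commerce")

Adapter = Tuple[Callable[[], Iterable[dict]], Callable[[dict], Order]]

class SemanticLayer:
    """Interprets source records as semantic types at query time."""

    def __init__(self) -> None:
        self._sources: List[Adapter] = []

    def register(self, fetch: Callable[[], Iterable[dict]],
                 to_order: Callable[[dict], Order]) -> None:
        self._sources.append((fetch, to_order))

    def orders(self, customer_id: Optional[str] = None) -> List[Order]:
        result = []
        for fetch, to_order in self._sources:
            for rec in fetch():              # read from the source on every query
                order = to_order(rec)
                if customer_id is None or order.customer_id == customer_id:
                    result.append(order)
        return result

layer = SemanticLayer()
layer.register(lambda: ERP_ORDERS, from_erp)
layer.register(lambda: COMMERCE_TRANSACTIONS, from_commerce)
```

Because the layer reads from each source on every call, a record added to the ERP shows up in `layer.orders()` without any pipeline run. The trade-off in this design is that query latency and availability now depend on the source systems themselves.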

Chapter 3

Large language models burst into the world with astonishing fluency. For the first time, machines could communicate with humans in natural language, explain complex subjects, draft compelling text, summarize dense documents, and interpret intent with uncanny smoothness. But as enterprises began testing these models within their own environments, they ran headfirst into a sobering realization: a public LLM, no matter how brilliant, knows nothing about the inner workings of their business. It does not understand the structure of their systems, the nuance of their data, the meaning of their objects, or the rules governing their operations. It does not recognize which information is sensitive, which relationships matter, which policies apply, or which actions are permissible. It is fluent without being knowledgeable, confident without being grounded, persuasive without being reliable.

In an enterprise context, this gap is not an inconvenience. It is a risk. A model that hallucinates a number can misstate financial projections. A model that lacks permission awareness can leak sensitive information. A model unaware of contractual obligations can propose actions that breach them. A model that improvises from incomplete context can generate explanations that sound authoritative but are factually wrong. The enterprise needs something more disciplined: not a storyteller, but an interpreter; not a generator of possibilities, but a reader of context; not a free-ranging agent, but a grounded participant in a complex, rule-bound environment.

This is where the AI Factory reshapes what an LLM becomes. Instead of sitting outside the architecture, bolted into applications through ad-hoc prompting, the LLM is placed inside the semantic fabric. It is not asked to invent meaning; it is asked to express meaning that already exists. It becomes a lens rather than an author, a reasoning layer rather than a source of unfiltered output. C3 embodies this approach through its model-driven architecture.
When a user issues a natural language question, the system does not simply forward the prompt to an LLM. It first interprets the request using the semantic model. If someone asks, “What caused the decline in output at Plant 14 yesterday?” the platform resolves “output,” “Plant 14,” “yesterday,” and “decline” into concrete semantic entities and time filters. It retrieves relevant signals from virtualized systems, applies permissions, filters out data the user cannot access, and constructs a factual context package. Only then does it invoke the LLM. The LLM reads structured facts, not raw data. It explains, interprets, and contextualizes, and because it is confined to that context, it has far less room to fabricate. It behaves like a bilingual interpreter translating between the language of business and the language of humans.

Palantir’s AIP performs a similar transformation but extends it into operational action. The LLM is connected to the Ontology, which means every question, every request, every suggestion is expressed through objects the enterprise understands. When asked, “Why are shipments to the Northeast delayed?” the LLM navigates the Ontology’s objects: shipments, orders, routes, carriers, events, temperatures, exceptions. It interprets relationships and retrieves facts rather than guessing. If asked to propose corrective actions, it generates recommendations grounded in actual constraints and operational data. It can even execute those actions through tool calling, but every action is permission-checked, logged, and auditable. The LLM becomes not a chatbot but a junior analyst with a perfect memory of the enterprise’s semantics and a strict adherence to rules.

IBM brings a governance-first lens. Watsonx ensures that every prompt, every model invocation, and every output has lineage and accountability. In industries where a single incorrect decision can create regulatory exposure, explainability is not optional. IBM ensures that the LLM’s behavior is transparent, contextual, and compliant.
This transforms the LLM from a risk into an asset, making it viable for functions like credit underwriting, medical decision support, or financial forecasting.

NVIDIA enables the entire grounding process to happen at the speed required for real-time work. Through NIM microservices, optimized inference pipelines, and the NeMo framework, NVIDIA keeps grounding, retrieval, tool calling, and reasoning fast enough for interactive use. Without this acceleration, the LLM would be too slow to embed deeply into operational flows. The AI Factory would become sluggish and unreliable. Instead, NVIDIA’s optimizations turn grounded LLMs into fast, interactive participants in the enterprise.

Together, these layers transform the function of the LLM. It ceases to be a precarious oracle and becomes an institutional intelligence instrument. It becomes capable of reading fifty documents and explaining their differences in a single breath; of summarizing a week of operational logs into a page of insight; of answering questions that previously required multiple analysts and hours of data retrieval; of expressing complex semantic relationships in the language of executives, engineers, operators, and customers alike. Most importantly, it no longer works in isolation. It works alongside agents, workflows, systems, and humans. It is part of the enterprise’s cognitive loop. It senses, it interprets, it articulates, and it participates.

With virtualization giving the enterprise a brain and grounded LLMs giving it a voice, the next question is how the enterprise acts. Interpretation alone is not enough. Every insight must eventually lead to a decision, and every decision must lead to execution. This is where agents enter – not as scripts, not as bots, but as the enterprise’s emerging hands and feet.
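The order of operations in the C3 example from this chapter (resolve the question's terms, retrieve signals, filter by permission, assemble a context package, and only then call the model) can be sketched as follows. Everything here is hypothetical: the alias tables, the `Signal` record, the `resolve`, `build_context`, and `answer` functions, and the `llm` callable, which stands in for whatever model endpoint a platform actually uses. Term resolution is reduced to dictionary lookup purely for illustration; a real platform would resolve terms against its semantic model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, Dict, List, Set

@dataclass
class Signal:
    entity: str          # semantic entity the reading belongs to
    day: date
    metric: str
    value: float
    classification: str  # hypothetical access label, e.g. "public" or "restricted"

# Hypothetical vocabulary mapping business terms to semantic identifiers.
ENTITY_ALIASES = {"plant 14": "Plant:14"}
METRIC_ALIASES = {"output": "units_produced"}

def resolve(question: str, today: date) -> Dict[str, object]:
    """Turn free text into a structured query before any model is called."""
    q = question.lower()
    entity = next((v for k, v in ENTITY_ALIASES.items() if k in q), None)
    metric = next((v for k, v in METRIC_ALIASES.items() if k in q), None)
    if entity is None or metric is None:
        raise ValueError("question does not resolve to known semantic entities")
    day = today - timedelta(days=1) if "yesterday" in q else today
    return {"entity": entity, "metric": metric, "day": day}

def build_context(query: Dict[str, object], signals: List[Signal],
                  clearances: Set[str]) -> List[Signal]:
    """Retrieve matching signals, then drop anything the user may not see."""
    day = query["day"]
    window = {day, day - timedelta(days=1)}  # prior day gives "decline" a baseline
    return [s for s in signals
            if s.entity == query["entity"] and s.metric == query["metric"]
            and s.day in window and s.classification in clearances]

def answer(question: str, signals: List[Signal], clearances: Set[str],
           today: date, llm: Callable[[str], str]) -> str:
    query = resolve(question, today)
    context = build_context(query, signals, clearances)
    if not context:
        return "No accessible data supports an answer."  # decline rather than guess
    facts = "\n".join(f"{s.day} {s.entity} {s.metric}={s.value}" for s in context)
    prompt = ("Answer using ONLY the facts below; say so if they are insufficient.\n"
              f"{facts}\nQuestion: {question}")
    return llm(prompt)  # the model is invoked only after grounding and filtering
```

The design choice worth noting is that the permission filter runs before prompt construction. Restricted values therefore never reach the model at all, rather than being redacted from its output afterward.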

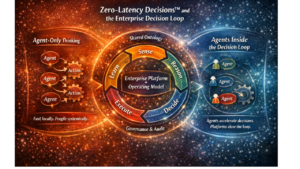

Chapter 4

Agents represent one of the most profound shifts in enterprise architecture since the advent of workflow systems and ERPs. Until recently, automation inside organizations was defined by brittle scripts, rigid rules engines, and procedural bots that mimicked human actions without understanding them. These systems performed tasks; they did not reason. They executed commands, not judgments. They followed predefined paths and crumbled the moment the world deviated from what the designer anticipated. Every exception became a failure mode. Every adjustment required a redesign. Every expansion meant more complexity piling onto an already fragile foundation.

Enterprises tolerated this for years because they had no alternative. Automation was limited to the most stable, repetitive processes, and everything else required human mediation. Humans became the ultimate integrators, the ones who noticed contextual cues, who interpreted meaning, who identified anomalies, who escalated decisions, and who routed information where it needed to go. Even in highly digitized organizations, people spent their days stitching the business together, not advancing it. AI promised to change this, but the early generations of machine learning did not understand the enterprise deeply enough to take on meaningful responsibility.

Agents inside an AI Factory are nothing like these earlier tools. They do not imitate keystrokes or replay pre-recorded behavior. They operate with context. They reason. They understand relationships. They evaluate trade-offs. They operate within the conceptual framework of the enterprise’s semantic layer. They do not just act on data; they understand what the data represents. They behave less like scripts and more like junior analysts, planners, or operators, except that they work continuously, at machine speed, across vast streams of information no human could ever keep up with.
In a C3-powered environment, an agent monitoring equipment health is not simply waiting for a vibration reading to cross a threshold. It knows what asset is being observed, how critical it is, what production schedule depends on it, what inventories are impacted by changes in output, what maintenance history suggests about its failure modes, what technicians are available, and what actions would minimize risk. If an anomaly occurs, the agent can propose a corrective action plan, open a work order, notify a supervisor, adjust the production schedule, request parts, and recommend an inspection sequence, all based on the semantic representation of the enterprise rather than a brittle tree of rules.

Palantir’s AIP introduces agents that are integrated even more deeply into operational systems. They are not siloed in analytics; they are wired directly into the machinery of the business. An AIP agent can see live telemetry, operational workflows, financial data, supply chain events, and risk signals in a single ontology. This allows it to coordinate decisions that once required multiple teams. If a shipment delay threatens a customer commitment, the agent can recalculate production allocations, reroute inventory, propose alternative carriers, and notify account managers, ensuring that the response is both immediate and multi-dimensional. Humans validate edge cases; the agent handles the continuous flow of micro-decisions required to keep the enterprise running smoothly.

IBM, with watsonx.governance, creates a safety perimeter around these agents. In industries where decisions have regulatory implications, every action must be traceable. When an agent approves a credit line increase, suggests a clinical path, or flags a financial anomaly, it must be able to justify its reasoning. IBM ensures that the data, models, prompts, and rules that influenced the action are recorded and explainable. This transforms agents from opaque automation into transparent collaborators.
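A minimal sketch of the pattern in the last three paragraphs: an equipment-health agent that chooses its response from the asset's context, and whose every planned action passes a permission check and lands in an audit record whether or not it runs. The asset fields, the 0.5 anomaly threshold, the action names, the `ROLE_PERMISSIONS` table, and the `AuditRecord` shape are all hypothetical. They are not C3, AIP, or watsonx.governance APIs.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Asset:
    asset_id: str
    criticality: str       # "high" or "low"
    feeds_schedule: bool   # does a production schedule depend on this asset?

@dataclass
class AuditRecord:
    action: str
    asset_id: str
    allowed: bool
    rationale: str

# Hypothetical permission table: the actions each agent role may execute on its own.
ROLE_PERMISSIONS = {
    "maintenance_agent": {"open_work_order", "notify_supervisor", "request_parts"},
}

@dataclass
class MaintenanceAgent:
    role: str = "maintenance_agent"
    audit_log: List[AuditRecord] = field(default_factory=list)

    def plan(self, asset: Asset, anomaly_score: float) -> List[str]:
        """Choose steps from the asset's context, not from the reading alone."""
        if anomaly_score < 0.5:          # assumed threshold for "anomalous"
            return []
        steps = ["open_work_order", "notify_supervisor"]
        if asset.criticality == "high":
            steps.append("request_parts")
        if asset.feeds_schedule:
            steps.append("adjust_production_schedule")  # not permitted: left for a human
        return steps

    def act(self, asset: Asset, anomaly_score: float) -> List[str]:
        """Permission-check each planned step and record it, allowed or not."""
        executed = []
        for action in self.plan(asset, anomaly_score):
            allowed = action in ROLE_PERMISSIONS.get(self.role, set())
            self.audit_log.append(AuditRecord(
                action=action,
                asset_id=asset.asset_id,
                allowed=allowed,
                rationale=(f"anomaly_score={anomaly_score}, "
                           f"criticality={asset.criticality}"),
            ))
            if allowed:
                executed.append(action)  # a real agent would call the system of record here
        return executed
```

Denied actions are still logged, so a reviewer can see what the agent intended as well as what it did. Routing a denied step such as `adjust_production_schedule` to a person is where the "humans validate edge cases" division of labor would plug in.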
NVIDIA underpins all of this by making multi-model agentic reasoning computationally feasible. Agents are rarely powered by a single LLM. They often use multiple models in sequence: one to interpret context, one to plan, one to verify, one to call tools, and one to summarize outcomes. Without GPU acceleration, such workflows would take seconds or minutes, far too slow for real-time operations. With accelerated inference, agents can reason continuously and interactively, enabling them to sit inside critical processes without slowing them down.

All of this creates a new dynamic inside the enterprise. For the first time, the organization can offload not just tasks but judgment. Agents do not eliminate human work. They eliminate the work humans should never have been doing in the first place: the repetitive evaluations, the constant triage, the manual reconciliations, the low-level decisions that consume cognitive bandwidth and clutter calendars. Humans remain indispensable for interpretation, escalation, creativity, ethical judgment, and cross-boundary leadership. But the constant drag of micro-decisions disappears.

The enterprise becomes more fluid. Decisions that once took hours now take seconds. Signals that were previously buried in operational noise are surfaced immediately. Processes that once depended on manual stitching of information become self-coordinating. Agents become the connective tissue of the business, the actors that keep everything moving in harmony.

But intelligence without coordination is not enough. A business is not merely a collection of decisions; it is a living network of processes. Those processes must flow, adapt, and respond to conditions in real time. For that, the AI Factory requires not just agents, but an adaptive nervous system. That nervous system emerges in the form of dynamic, context-aware workflows.
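The chain described in this chapter (one model to interpret, one to plan, one to verify, one to call tools, one to summarize) can be sketched with each "model" replaced by a plain Python function so the control flow stays visible. In practice each stage would call a separately served model. The stage functions, the `ALLOWED_TOOLS` set, the deliberately over-eager planner, and the per-stage latencies are all hypothetical. The latencies are there only to make one point explicit: because the stages run in sequence, their latencies add.

```python
from typing import Callable, Dict, List, Tuple

Stage = Callable[[Dict], Dict]

def interpret(state: Dict) -> Dict:
    # Stand-in for a model that classifies the incoming event.
    state["intent"] = "expedite" if "delay" in state["event"] else "monitor"
    return state

def plan(state: Dict) -> Dict:
    # Stand-in for a planning model; it may propose steps it is not allowed to take.
    state["plan"] = (["reroute_inventory", "notify_account_manager",
                      "cancel_customer_order"]
                     if state["intent"] == "expedite" else [])
    return state

ALLOWED_TOOLS = {"reroute_inventory", "notify_account_manager"}

def verify(state: Dict) -> Dict:
    # Stand-in for a verifier: keep only steps that map to permitted tools.
    state["rejected"] = [s for s in state["plan"] if s not in ALLOWED_TOOLS]
    state["plan"] = [s for s in state["plan"] if s in ALLOWED_TOOLS]
    return state

def call_tools(state: Dict) -> Dict:
    state["results"] = [f"{step}: ok" for step in state["plan"]]  # stubbed tool calls
    return state

def summarize(state: Dict) -> Dict:
    state["summary"] = (f"{len(state['results'])} actions taken, "
                        f"{len(state['rejected'])} rejected")
    return state

# (stage, hypothetical latency in milliseconds); the numbers are illustrative only.
PIPELINE: List[Tuple[Stage, float]] = [
    (interpret, 120.0), (plan, 300.0), (verify, 80.0),
    (call_tools, 150.0), (summarize, 100.0),
]

def run_agent(event: str) -> Tuple[Dict, float]:
    state: Dict = {"event": event}
    total_ms = 0.0
    for stage, latency_ms in PIPELINE:
        state = stage(state)
        total_ms += latency_ms   # stages run in sequence, so latencies add
    return state, total_ms
```

With these made-up figures, a delay event costs 750 ms end to end. Halving every stage's latency halves that total, which is why per-model inference speed compounds in multi-model agents.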

Chapter 5

Workflows have long been the invisible skeleton of enterprise operations, quietly holding processes together while rarely earning attention or respect. But traditional workflows were built on assumptions of stability. They reflected a world where demand patterns shifted slowly, supply chains behaved predictably, organizational boundaries were static, and exceptions were rare. In such a world, it made sense to design processes as rigid sequences of tasks, approvals, and handoffs. The goal was standardization and compliance. Variation was treated as a nuisance.

That world no longer exists. Modern enterprises live inside a continuous storm of variability. Demand fluctuates in hours instead of quarters. Supply disruptions cascade unpredictably across global networks. Competitors act faster. Customers expect instant resolution. Regulations evolve rapidly. Data arrives in real time. And AI introduces new flows of insight and possibility that the old systems were never built to interpret. Rigid workflows crack under this pressure. They are too slow, too brittle, too static, and too ignorant of context. They route tasks without understanding the meaning behind them. They treat exceptions as errors rather than as inherent features of a dynamic business.

The AI Factory transforms workflows from rigid diagrams into adaptive, context-aware processes. Instead of encoding processes as fixed sequences, it treats them as living systems guided by intent. Users no longer define what should happen step by step. They define the outcome they want, and the system interprets how to achieve it based on the semantic model, the current state of the enterprise, the capabilities of agents, and the boundaries of governance. This shift turns workflows from instruments of constraint into engines of coordination.

In a C3-powered environment, a workflow is created in the same semantic space where agents and LLMs operate. It inherits the meaning of objects automatically.
When someone defines a process like “If a generator shows the failure signature associated with bearing degradation, create a work order, notify the shift supervisor, reprioritize maintenance tasks, and update the capacity forecast,” the platform does not treat these as disconnected instructions. It knows what a generator is, what bearing degradation implies, what work orders represent, how capacity forecasts are calculated, and who the shift supervisors are. It orchestrates the sequence not by blindly following a diagram, but by interpreting the enterprise’s digital reality.

Palantir AIP extends this even further. Because workflows in AIP inherit the Ontology’s interconnected objects, they see the entire business, not just a slice of it. If a delayed shipment threatens a production schedule, the workflow perceives not only the delay but its downstream impact: which orders will be affected, which customers are impacted, which regulatory commitments apply, which alternative inventories exist, which transportation routes are available, and how the delay intersects with financial targets. AIP workflows do not move tasks. They manage situations. They bring together data, models, agents, and humans into coordinated responses that were once the product of weeks of cross-functional meetings.

IBM brings another dimension: traceability. In highly regulated industries, workflows are not merely operational constructs; they are auditable processes with legal implications. Every decision, every branching condition, every exception must be recorded and explainable. Watsonx.governance ensures that adaptive workflows retain transparency without sacrificing agility. Agents can make context-aware decisions, LLMs can provide explanations, and the enterprise can remain compliant even as processes become fluid and intelligent.

NVIDIA bridges the gap between intelligence and speed.
Adaptive workflows often require fast reasoning loops: detect a condition, retrieve context, evaluate semantic relationships, consult models, verify outcomes, and execute actions. Doing this at scale and in real time requires accelerated infrastructure. GPU-optimized inference makes it possible to embed intelligence in the very core of process execution, not as an afterthought, but as a first-class feature. This creates workflows that feel almost alive: sensing disturbances, interpreting their significance, coordinating distributed action, and adapting to outcomes continuously.

The emergence of adaptive workflows represents a deeper shift in how enterprises function. Processes stop being static artifacts frozen in diagrams. They become dynamic systems shaped by data, meaning, and intent. They respond to conditions with intelligence rather than rigidity. They collaborate with agents and humans rather than dictating steps to them. They connect abstract goals to concrete actions in ways that were once impossible.

Perhaps the most important shift is cultural. In traditional systems, people must adjust their behavior to the constraints of the workflow. In the AI Factory, the workflow adjusts to the needs of the business. It becomes a partner rather than a constraint. It helps humans focus on decisions that matter rather than burdens they were never meant to carry.

With workflows becoming intelligent and adaptive, the enterprise needs a new kind of interface, one that allows humans to collaborate with models, agents, and processes in a unified environment.
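The generator example from this chapter can be pictured as a workflow declared as a trigger plus intended outcomes. Each step looks up what it needs ("the shift supervisor", "the capacity forecast") from related objects instead of from hard-coded parameters, and every decision is written to a trace that an auditor could replay. The sketch assumes hypothetical `Plant`, `Generator`, and `WorkflowRun` objects, a `bearing_degradation` signature flag set by some upstream model, escalation to the plant manager when nobody is on shift, and a 10% capacity reduction. None of this reflects a specific vendor's workflow engine.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Plant:
    name: str
    shift_supervisor: Optional[str]  # None when no supervisor is on shift
    plant_manager: str
    capacity_forecast: float         # hypothetical units per day

@dataclass
class Generator:
    gen_id: str
    plant: Plant                     # relationship, not a copied field
    signatures: List[str]            # failure signatures detected by upstream models

@dataclass
class WorkflowRun:
    trace: List[str] = field(default_factory=list)  # auditable record of every step
    work_orders: List[str] = field(default_factory=list)
    maintenance_queue: List[str] = field(default_factory=list)

def bearing_workflow(gen: Generator, run: WorkflowRun) -> WorkflowRun:
    """Trigger plus intended outcomes; details are resolved from the objects."""
    if "bearing_degradation" not in gen.signatures:
        run.trace.append(f"{gen.gen_id}: trigger not met, no action")
        return run
    run.work_orders.append(f"WO-{gen.gen_id}")
    run.trace.append(f"created work order WO-{gen.gen_id}")
    # "The shift supervisor" is looked up on the plant; escalate if nobody is on shift.
    if gen.plant.shift_supervisor:
        recipient, reason = gen.plant.shift_supervisor, "on shift"
    else:
        recipient, reason = gen.plant.plant_manager, "no supervisor on shift, escalated"
    run.trace.append(f"notified {recipient} ({reason})")
    if gen.gen_id in run.maintenance_queue:
        run.maintenance_queue.remove(gen.gen_id)
    run.maintenance_queue.insert(0, gen.gen_id)   # move to the front of the queue
    run.trace.append(f"reprioritized maintenance: {gen.gen_id} first")
    gen.plant.capacity_forecast *= 0.9            # assumed 10% derating while degraded
    run.trace.append(f"capacity forecast for {gen.plant.name} now "
                     f"{gen.plant.capacity_forecast:.0f}")
    return run
```

The escalation branch shows the adaptive behavior in miniature. The declared outcome ("notify whoever is responsible") stays fixed, while the recipient is resolved from the plant's current state, and the trace records which branch was taken and why.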

Chapter 6

For decades, enterprise applications were designed around the limitations of the systems beneath them. Each major domain of the business (sales, finance, supply chain, operations, HR, manufacturing) had its own system of record, its own database, its own terminology, its own constraints. Applications were built as interfaces to those systems rather than as expressions of the business itself. As a result, users learned to think in the language of software rather than in the language of work. They navigated an endless maze of screens, menus, tabs, forms, and reports that reflected data structures, not mental models. Their job was not simply to make decisions but to translate real problems into the abstractions that their IT systems could understand.

The AI Factory upends this dynamic. Once the enterprise has a virtualized data layer and a unified semantic model, applications no longer need to mirror the underlying systems. They can finally mirror the business. Instead of being windows into scattered databases, they become collaborative environments where humans, agents, and models work together on shared objects with shared meaning. They do not present rows and columns; they present the conceptual fabric of the enterprise.

In a C3 environment, an application built on the model-driven architecture does not treat a maintenance dashboard, a demand planning interface, or an operations cockpit as a collection of widgets. It treats them as living views of the semantic types they represent. A planner looking at inventory is not seeing data pulled from multiple systems and stitched together for visualization. They are seeing the enterprise’s understanding of what inventory actually is: its origin, its relationships, its constraints, its risks, its trajectories. The interface becomes a living expression of meaning, not a static reflection of storage. Agents can act through this interface, LLMs can interpret it, and humans can navigate it without friction.
The application becomes a stage for collaboration, not a window into a silo.

Palantir’s approach emphasizes this even more forcefully. Foundry’s Workshop environments allow users to move fluidly across objects in the Ontology without switching systems or recontextualizing information. A logistics manager examining a delayed shipment can immediately pivot to related orders, customer commitments, regulatory obligations, or upstream production constraints, all within the same conceptual environment. They can explore the situation with LLM copilots that understand the relationships within the Ontology. They can launch workflows or trigger agentic actions directly from the same space. The application becomes a conversational, contextual workspace, not a static tool.

IBM, with its emphasis on governance and traceability, ensures that the application layer maintains transparency even as it becomes more dynamic. When a model generates a recommendation or an agent proposes an action, the interface can surface the reasoning, the data lineage, the model version, and the policy context. This gives users confidence that the intelligence embedded in the interface is accountable. The system does not simply tell them what to do; it shows them why the recommendation makes sense.

NVIDIA’s contributions are subtler but equally important. Many of the experiences made possible by the AI Factory (real-time LLM interaction, multi-model agentic reasoning, high-frequency simulation, instant recalculation of complex relationships) require accelerated compute to be seamless. Without fast inference, these interactions would become sluggish, interrupting the flow of human cognition. With GPU-backed responsiveness, applications feel alive. The interface becomes a place where ideas can be explored fluidly, where hypotheses can be tested in real time, where questions can be answered instantly. It becomes an extension of the user’s mind rather than a barrier to it.
The result of all this is a new kind of enterprise application: one that is no longer bound by the constraints of the underlying systems but liberated by the intelligence of the architecture. It is a place where business users can think in business terms, where complexity is rendered manageable through semantic clarity, where intelligence is embedded naturally into the workflows of daily work. Interfaces no longer force people to adapt to the limitations of the system. Instead, the system adapts to the way people think, act, and decide.

This shift has far-reaching cultural implications. Teams begin to collaborate across functions without the friction of translation. Insights flow naturally across boundaries. The same semantic objects are understood by planners, analysts, operators, and executives alike. The interface becomes a unifying layer that harmonizes perspectives, aligns decisions, and accelerates coordination. It becomes the expressive front-end of the AI Factory’s intelligence.

With the application layer transformed into a fluid, collaborative environment, the enterprise needs one more ingredient to achieve scale: an industrial backbone capable of powering intelligence everywhere.
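Earlier in this chapter, the interface is described as surfacing the reasoning, data lineage, model version, and policy context behind each recommendation. One simple data shape that makes this possible is a recommendation that carries its provenance with it, so that any view can render the "why" next to the "what". The sketch below is written under that assumption. The field names, the `render` format, and all example values (shipment ID, dataset names, model and policy identifiers) are hypothetical, not watsonx or Workshop structures.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Provenance:
    reasoning: str
    data_sources: Tuple[str, ...]  # lineage: the datasets the inputs came from
    model_version: str
    policy: str                    # policy under which the action is permitted

@dataclass(frozen=True)
class Recommendation:
    subject: str                   # the semantic object the recommendation concerns
    action: str
    provenance: Provenance         # travels with the recommendation to every view

def render(rec: Recommendation) -> str:
    """Render the 'what' together with the 'why' for display in any interface."""
    p = rec.provenance
    return "\n".join([
        f"{rec.subject}: {rec.action}",
        f"  Why: {p.reasoning}",
        f"  Based on: {', '.join(p.data_sources)}",
        f"  Model: {p.model_version} | Policy: {p.policy}",
    ])

rec = Recommendation(
    subject="Shipment:SH-42",
    action="Switch to carrier B",
    provenance=Provenance(
        reasoning="Carrier A's current ETA misses the SLA window",
        data_sources=("tms.shipments", "carrier.sla_terms"),
        model_version="delay-model-v3",
        policy="logistics-policy-7",
    ),
)
print(render(rec))
```

Keeping provenance on the object itself, rather than in a separate log the interface has to query, means a recommendation cannot be displayed without its justification.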

Chapter 7

Every factory, no matter how elegantly designed, depends on an industrial backbone that powers its machinery. The AI Factory is no different. Beneath its semantic intelligence, its agents, its grounded LLMs, and its adaptive workflows lies an infrastructure layer that must deliver speed, reliability, and scale. Without this foundation, the architecture would collapse under its own ambitions. It would be too slow to respond in real time, too fragile to support continuous reasoning, too expensive to operate at enterprise scale. The intelligence of the AI Factory is only as strong as the computational grid that carries it.

This is where NVIDIA and the hyperscalers play their defining role. They do not create the semantic model or the agents or the workflows, but they provide the power source that makes these components function in an industrial, production-grade setting. Their contribution is not conceptual but operational. They make intelligence durable.

NVIDIA sits at the heart of this transformation because real-time reasoning requires computational acceleration. Traditional CPUs, no matter how optimized, cannot run the multi-model inference loops that define modern AI at the latency and volume that continuous operation demands. A single agent may use one model to interpret context, another to plan, another to verify, another to call tools, and another to summarize. A grounded LLM may need to perform retrieval, evaluate policy constraints, run safety filters, and construct long-form reasoning paths, all within a fraction of a second. The volume of these operations multiplies as the enterprise transitions from occasional AI interactions to continuous, always-on intelligence. GPU-powered acceleration is the difference between an AI Factory that pauses for seconds between insights and an AI Factory that thinks continuously. NVIDIA’s NIM microservices and the NeMo framework are designed to deliver this performance at scale.
They convert LLMs, multimodal models, and agentic reasoning modules into fast, reliable services that can operate across thousands of concurrent interactions. This is not simply a matter of speed; it is a matter of viability. An AI Factory without fast inference is like a factory without electricity. The hyperscalers add elasticity and resilience. AWS, Azure, and Google Cloud provide the global compute fabric that allows the AI Factory to scale up during demand spikes and scale down during calm periods. They provide the managed services for orchestration, identity, monitoring, storage, and networking that allow the architecture to operate continuously. They also allow enterprises to deploy workloads in multiple regions, reduce latency through proximity, and comply with data residency requirements that vary by jurisdiction. More importantly, the hyperscalers create an environment where experimentation becomes affordable. Before cloud elasticity, enterprises had to size their infrastructure for peak load, making advanced compute prohibitively expensive. With the hyperscalers’ pay-as-you-go model, enterprises can run heavy simulations, train specialized models, or deploy large agentic workflows without committing to fixed capital investments. This fluidity accelerates innovation. It also ensures that the AI Factory can grow organically, expanding as the enterprise adopts new use cases without requiring wholesale reinvention of its infrastructure. The combination of hyperscale elasticity and NVIDIA acceleration gives the AI Factory its industrial-grade reliability. It ensures that grounded LLMs respond instantly, that agents reason continuously, that workflows adapt smoothly, and that applications remain interactive even during high load. It turns intelligence into an operational constant rather than a theoretical aspiration. Just as importantly, this infrastructure dissolves boundaries inside the enterprise. 
Teams no longer debate hardware capacity, storage limits, or provisioning timelines. They no longer ration experiments. They no longer make architectural compromises to conserve resources. The infrastructure becomes invisible, a dependable energy grid powering a factory that never sleeps. With infrastructure handled, the enterprise is finally free to confront the most transformative implication of the AI Factory: that it does not merely enable intelligence. It eliminates entire categories of cost, friction, and delay that have defined enterprise technology for decades. And in doing so, it unlocks the economic engine that funds the transition to an AI-native enterprise.
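The chapter's point that one agent may chain a model to interpret, another to plan, another to verify, another to call tools, and another to summarize can be made concrete with a small sketch. It assumes the stages run strictly in sequence and uses stub functions in place of real model endpoints. The stage names, the helpers `make_stage`, `run_agent`, and `build_agent`, and the per-call latencies (800 ms versus 60 ms) are hypothetical illustrations, not benchmarks of any CPU, GPU, or NVIDIA product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    """One step of a hypothetical agent loop, each backed by its own model."""
    name: str
    run: Callable[[str], str]  # stand-in for a call to a served model endpoint
    latency_ms: float          # assumed per-call latency, not a measured figure


def make_stage(name: str, latency_ms: float) -> Stage:
    # The stub wraps its input so the chain of calls is visible in the output.
    return Stage(name, lambda text: f"{name}({text})", latency_ms)


def run_agent(request: str, stages: list[Stage]) -> tuple[str, float]:
    """Run stages in order; each stage consumes the previous stage's output."""
    text, total_ms = request, 0.0
    for stage in stages:
        text = stage.run(text)
        total_ms += stage.latency_ms  # sequential calls: latencies add up
    return text, total_ms


STAGE_NAMES = ["interpret", "plan", "verify", "call_tool", "summarize"]


def build_agent(per_call_ms: float) -> list[Stage]:
    return [make_stage(n, per_call_ms) for n in STAGE_NAMES]


if __name__ == "__main__":
    _, slow_ms = run_agent("reorder?", build_agent(per_call_ms=800.0))
    fast_answer, fast_ms = run_agent("reorder?", build_agent(per_call_ms=60.0))
    print(fast_answer)       # summarize(call_tool(verify(plan(interpret(reorder?)))))
    print(slow_ms, fast_ms)  # 4000.0 300.0
```

Because each stage waits for the one before it, per-call latency is multiplied by the number of stages in the loop. Under these assumed numbers, the same five-step request takes four seconds on the slow path and under a third of a second on the fast one, which is why the chapter treats inference speed as a matter of viability rather than convenience.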

Chapter 8
The economic breakthrough of the AI Factory is often misunderstood. Many organizations assume that embracing AI at scale must involve new expenditures, new systems, new teams, and new platforms layered on top of everything that came before. They assume that transformation means additive cost. But the AI Factory does not add complexity. It replaces it. It does not create new burdens. It dismantles existing ones. It does not demand ever-growing investments in data pipelines, lakes, warehouses, and integration layers. It systematically eliminates them. To understand this, one must look honestly at where enterprise technology budgets actually go. The largest share is not spent on advanced intelligence or strategic capabilities. It is spent on the hidden tax of integration. Every extract, every pipeline, every reconciliation job, every transformation script, every half-governed dashboard, every siloed model, every competing definition of a customer or an order or a transaction imposes a cost. Not a one-time cost, but a recurring one. A cost paid year after year as teams maintain the machinery required to hold inconsistent data structures together. Data lakes were supposed to solve this. They promised a single place where all data could live, where cleanup could happen once, where access would be democratized, where pipelines would be simplified. But in practice, lakes became staging grounds for competing definitions. They housed data, but they did not unify meaning. They collected information, but they did not harmonize interpretation. They centralized storage, but they did nothing to eliminate the thousand small acts of translation performed daily by analysts, engineers, and system integrators. The tax persisted. In many cases, it grew. The AI Factory cuts through this complexity by shifting the focus from data movement to data understanding. Integration stops being a matter of moving bits and becomes a matter of defining relationships.
When systems are virtualized and their meaning is expressed through a shared semantic layer, the enterprise no longer needs to duplicate data or rebuild logic. It connects once and reuses infinitely. The cost of maintaining pipelines collapses. The cost of creating them disappears. The cost of reconciling mismatched definitions evaporates. This is why enterprises that adopt the AI Factory often discover that they can fund their AI transformation with the savings generated by dismantling their legacy integration stack. They free up budgets previously locked in perpetual maintenance. They eliminate teams dedicated to reconciliation work that never produced strategic insight. They redirect resources toward creating intelligence rather than managing entropy. The implications extend far beyond cost savings. The AI Factory collapses time. Initiatives that once took months of data preparation can now begin immediately. Projects that once needed weeks of IT coordination can launch in days. Pilots that once stalled because they lacked clean data can progress because meaning is inherent in the architecture. The organization stops paying in time what it once paid in money. Even more transformative is the effect on opportunity cost. In the traditional architecture, every new AI idea competes for scarce integration resources. The pipeline team becomes the bottleneck. The data governance group becomes the gatekeeper. Important ideas die because the infrastructure cannot support them. The AI Factory removes these constraints. With meaning unified and systems virtualized, every new idea inherits the entire foundation of the architecture. A team can ask a question, prototype an idea, model a scenario, or deploy an agent without waiting for an extract to be built or a schema to be reconciled. This unlocks a new pace of innovation. Instead of working through the backlog of integration requests, teams can focus on creating value. 
Instead of negotiating access, they can engage with meaning. Instead of debating definitions, they can explore possibilities. Instead of spending energy on preparation, they can spend it on discovery. The economic equation shifts. AI ceases to be an investment that depends on future returns. It becomes a capability funded by the removal of past inefficiencies. The enterprise stops carrying the weight of decades of integration debt. It begins to move lightly, quickly, intelligently. This is the moment when leaders realize that the AI Factory is not simply a better architecture. It is a different economic model. It breaks the tradeoff between intelligence and cost. It replaces a world where innovation requires constant reinvention with one where innovation compounds. It transforms every dollar previously spent on maintaining complexity into a dollar that advances intelligence. With the economic foundation in place, the enterprise is finally ready to undergo the deepest transformation of all. Not a transformation of technology, but a transformation of how it operates.
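The shift from moving data to defining relationships can be sketched in a few lines. This is a minimal illustration under stated assumptions: two hypothetical source systems (`CRM_ROWS`, `ERP_ROWS`) name the same customer differently, and a hypothetical `SemanticLayer` class maps both onto one shared `Customer` definition at read time instead of copying data into a new store. Real data-virtualization and semantic-layer products work against live databases and APIs with far richer mapping, security, and caching; none of their APIs are represented here.

```python
from dataclasses import dataclass

# Two hypothetical source systems, each with its own schema for "customer".
CRM_ROWS = [
    {"cust_id": "C-001", "full_name": "Acme GmbH", "segment": "enterprise"},
    {"cust_id": "C-002", "full_name": "Borealis Ltd", "segment": "mid-market"},
]
ERP_ROWS = [
    {"customer_no": "C-001", "open_order_value": 125_000.0},
    {"customer_no": "C-002", "open_order_value": 0.0},
]


@dataclass(frozen=True)
class Customer:
    """The single shared business definition that every consumer sees."""
    customer_id: str
    name: str
    segment: str
    open_order_value: float


class SemanticLayer:
    """Maps source fields onto shared objects at read time; nothing is copied."""

    def __init__(self, crm_source: list[dict], erp_source: list[dict]):
        # Keep references to the live sources rather than extracting them.
        self._crm = crm_source
        self._erp = erp_source

    def customers(self) -> list[Customer]:
        # The relationship (cust_id <-> customer_no) is defined once, here.
        erp_by_id = {row["customer_no"]: row for row in self._erp}
        result = []
        for row in self._crm:
            erp = erp_by_id.get(row["cust_id"], {})
            result.append(Customer(
                customer_id=row["cust_id"],
                name=row["full_name"],
                segment=row["segment"],
                open_order_value=erp.get("open_order_value", 0.0),
            ))
        return result


if __name__ == "__main__":
    layer = SemanticLayer(CRM_ROWS, ERP_ROWS)
    # A planner's question and an analyst's question reuse the same definition.
    idle_accounts = [c.name for c in layer.customers() if c.open_order_value == 0.0]
    exposure = sum(c.open_order_value for c in layer.customers())
    print(idle_accounts, exposure)  # ['Borealis Ltd'] 125000.0

    ERP_ROWS[1]["open_order_value"] = 40_000.0  # a change in the source system...
    print(sum(c.open_order_value for c in layer.customers()))  # ...shows up on the next read: 165000.0
```

Because the layer holds references to the sources rather than extracts, the change to `ERP_ROWS` appears on the next read without any pipeline being rerun, and every new question reuses the one mapping instead of building its own. That is the "connect once, reuse" economics described above, in miniature.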

Chapter 9
When all the layers of the AI Factory come together, something remarkable happens inside the enterprise. It stops functioning like a collection of departments and systems connected by human effort, and begins functioning like a coherent intelligent organism. The organization gains a kind of nervous system. It senses changes as they occur, interprets them through shared meaning, decides through agentic reasoning, acts through adaptive workflows, and learns through every interaction. This is not automation. It is not digitization. It is not a new tool. It is a new operating model. In this operating model, the friction that once defined enterprise life dissolves. Silos lose their force because the semantic layer unifies meaning across them. Delays shrink because workflows adapt to conditions rather than waiting for human routing. Complexity recedes because intelligence is embedded in the architecture rather than layered on top. What once required dozens of meetings, reconciliations, permissions, extracts, spreadsheets, dashboards, and escalations can now unfold in a fluid, coordinated rhythm. Consider demand planning. Traditionally, it was a monthly ritual performed through spreadsheets stitched together by analysts who spent more time ensuring the data aligned than interpreting what it meant. Each function had its own version of the truth. Consensus required negotiation rather than insight. And by the time the forecast was approved, reality had already shifted. In an AI Factory, demand planning becomes a continuous process. Signals flow automatically from every relevant system. Agents detect anomalies and propose adjustments. LLMs explain drivers and highlight risks. Workflows coordinate changes across functions. The organization does not wait for a meeting to adapt. It adapts as conditions change. Or consider maintenance. In legacy environments, maintenance is reactive or scheduled on fixed intervals, never fully aligned with actual asset conditions.
Failures cause cascading disruptions. Preventive checks are performed too often or too late. Data is available but disconnected. In the AI Factory, maintenance becomes anticipatory. Agents monitor equipment continuously, interpret degradation patterns, connect them to production schedules, assess the impact on customer commitments, and recommend interventions before failures occur. Workflows coordinate technicians, parts, and timing. The process becomes a living cycle of observation and action rather than an episodic task. Customer operations undergo a similar transformation. What was once a fragmented maze of tickets, escalations, manual lookups, and inconsistent answers becomes a unified interaction model. An LLM coupled with the semantic layer understands the full context of a customer’s situation (orders, delays, replacements, service history, financials, and obligations) and can respond with precision and coherence. Agents initiate actions without waiting for human intervention. Workflows coordinate cross-functional activities automatically. The customer perceives the organization as one entity, not a federation of disconnected departments. The cultural transformation is even deeper. People stop spending their days on work that machines can do better. They are no longer translators between systems, reconcilers of mismatched reports, or coordinators of broken processes. Their value shifts to judgment, creativity, strategy, and leadership. They work with machines, not around them. They guide, validate, and elevate the intelligence embedded in the architecture rather than compensating for its absence. The enterprise becomes more ambitious because it can finally afford to be. It becomes faster because friction no longer governs its tempo. It becomes more cohesive because intelligence is shared rather than isolated. It becomes more resilient because agents and workflows respond to disruptions instantly rather than waiting for human attention.
It becomes more innovative because experiments are no longer bounded by integration constraints. Most importantly, the AI Factory does not stifle creativity. It amplifies it. Startups integrating with the enterprise no longer confront a wall of incompatible systems and bespoke data flows. Internal innovators no longer need to negotiate for months to access the data they need. Teams no longer need to build everything from scratch. Ideas move quickly because the foundation supports them. Creativity thrives because the architecture removes the burdens that once suffocated it. The AI Factory is not simply a way to implement AI. It is the architectural and conceptual transition from a human-bottlenecked enterprise to an intelligence-enabled one. It is the shift from fragmented tooling to unified meaning, from static processes to adaptive ones, from isolated efforts to compounding capability. It is the recognition that intelligence cannot emerge from scattered systems, that speed cannot emerge from brittle pipelines, that insight cannot emerge from conflicting definitions, and that creativity cannot thrive in environments defined by friction. In this new operating model, intelligence becomes the default state of the enterprise, not an add-on. The organization becomes able to sense, interpret, decide, and act continuously. It becomes capable of learning from itself. It becomes capable of operating at the speed at which the world now moves. This is not a future aspiration. It is a present reality for the organizations that have embraced the AI Factory. And it is the path forward for every enterprise that seeks to operate without friction, without latency, and without the architectural burdens of the past. The shift is profound. It is fundamental. And it is inevitable.
