Audience and Intended Use

This document is written for Boards of Directors and Executive Committees of G2000 Global Group enterprises. It is intended to inform governance, operating model design, and capital allocation decisions related to AI, automation, robotics, and enterprise transformation.

This is not a technology implementation guide. It is an operating doctrine and governance framework.

Intellectual Property and Trademark Notice

This document contains proprietary concepts, operating frameworks, and terminology developed by the author.

Zero-Latency Decision™ is a trademarked operating framework describing continuous enterprise decision and execution capability.

The AI Factory refers to an integrated enterprise runtime for industrialized intelligence, not a specific product or vendor.

Unauthorized reproduction, distribution, or derivative use of this material beyond internal board and executive use is prohibited.

Glossary of Core Terms

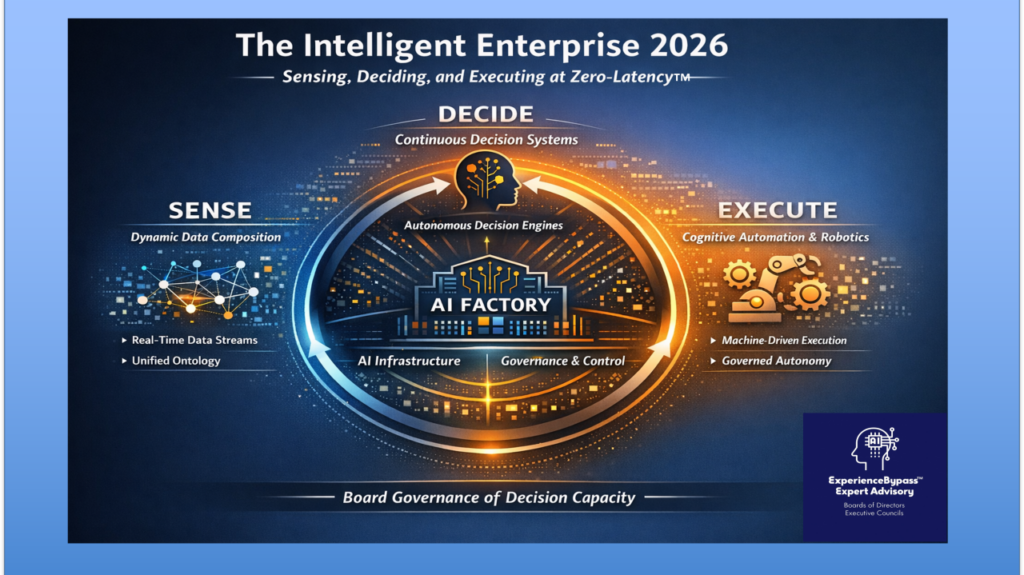

Intelligent Enterprise:

An enterprise designed to continuously sense, decide, and execute across digital and physical domains with minimal latency.

AI Factory:

The integrated enterprise technology stack that industrializes intelligence into a governed, continuous runtime.

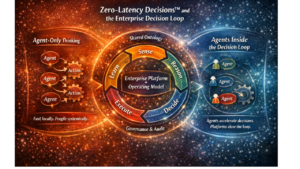

Zero-Latency Decision™:

An operating framework in which decisions are sensed, evaluated, and executed continuously without organizational or architectural delay.

Decision Stewardship:

Human ownership of intent, constraints, and outcomes in machine-executed decision systems.

Cognitive Execution:

The automated execution of decisions across digital and physical systems with feedback loops.

The Intelligent Enterprise in 2026

Operating Doctrine, Architectural Imperatives, and Board-Level Implications for the G2000 Global Group

Introduction

Why 2026 Forces the Transition to the Intelligent Enterprise

The year 2026 represents a structural inflection point in enterprise design.

Not because artificial intelligence is new.

Not because automation, robotics, or Industry 4.0 are novel.

But because, for the first time, enterprises can be designed to operate as continuously intelligent systems, rather than collections of functions coordinated by periodic management cycles.

This is the defining characteristic of the Intelligent Enterprise.

An Intelligent Enterprise is not one that deploys AI tools, analytics platforms, automation software, or robotics programs in isolation. It is one that continuously senses its environment, reasons over context, decides coherently across the enterprise, and executes at machine speed, while preserving human intent, accountability, and control.

Until recently, this was not structurally possible at scale.

What Has Changed in the Last Five Years

Between roughly 2020 and 2025, several independent capability curves crossed critical thresholds at the same time. Individually, none would have forced a fundamental redesign. Together, they rendered the traditional enterprise operating model obsolete.

Artificial intelligence became operationally dependable.

Prediction, optimization, anomaly detection, and probabilistic reasoning reached reliability levels suitable for production decisioning. Large Language Models unlocked unstructured information and enabled reasoning over language at scale. At the same time, model availability exploded and differentiation compressed.

Automation matured into an execution fabric.

Automation moved beyond task scripting into orchestration. Modern automation now coordinates end-to-end workflows, handles exceptions, enforces policy, and recovers from failure. Automation became a system of execution, not a productivity aid.

Robotics and Industry 4.0 became software-native.

Physical operations, once isolated and mechanical, became instrumented, networked, and software-controlled. Plants, fleets, and logistics systems are now addressable through the same control surfaces as enterprise software.

The economics of intelligence collapsed.

Compute became elastic. Models became reusable. Tooling standardized. Infrastructure commoditized.

Capabilities that once required elite teams and bespoke engineering are now accessible in principle across the G2000, but they are not yet coherently adopted. Access to powerful AI, automation, and analytics primitives is widespread; the architectural ability to operate them as a unified enterprise capability remains uneven and incomplete.

In practice, most G2000 enterprises are still attempting to assemble intelligence from general-purpose cloud tools, stitching together services from hyperscalers and adjacent ecosystems. While these platforms provide critical primitives, they do not, on their own, constitute an Intelligent Enterprise architecture. The result is a landscape of fragmented deployments: models without runtime coherence, automation without decision integration, and analytics without execution authority. Intelligence exists, but it does not operate as a system.

Intelligence Has Been Commoditized and Is Now Universally Accessible

A defining shift now underpins everything that follows: intelligence itself has been commoditized.

Advanced AI capabilities are no longer scarce, proprietary, or elite. High-quality models, elastic compute, and standardized tooling mean that any enterprise, at any time, can access powerful prediction, optimization, and language intelligence.

This is not a future condition.

It is the baseline reality of 2026.

As a result, having intelligence no longer confers advantage. Competitive advantage shifts entirely to how intelligence is architected, governed, and embedded into decision flow and execution inside the enterprise.

From Scarcity to Abundance Changes the Rules of Competition

When intelligence was scarce, enterprises competed on access.

When intelligence becomes abundant, enterprises compete on coherence, speed, and reliability.

In an environment where intelligence is universally accessible, latency becomes visible, fragmentation becomes costly, and incoherence becomes fatal. Enterprises no longer lose because they lack insight. They lose because they cannot act coherently and fast enough on insight that everyone has.

This is the context in which the Intelligent Enterprise becomes mandatory rather than aspirational.

The New Constraint: Organizational Latency

As sensing, reasoning, and execution become cheap and fast, the dominant constraint moves.

The constraint is no longer technology.

The constraint is organizational latency.

Latency now comes from functional handoffs, committee approvals, data movement assumptions, human-in-the-loop defaults, and governance models designed for a slower era.

Each of these sources adds delay between signal and action. Enterprises increasingly fail not for lack of insight, but because that delay prevents them from acting coherently on insight that everyone already has.

The Missing Piece: A New Enterprise Technology Stack

Operating as an Intelligent Enterprise is not possible on traditional application, data, or analytics stacks.

What makes the Intelligent Enterprise operable is the emergence of a new enterprise technology stack, purpose-built for continuous decisioning and execution. This stack is fundamentally different from application delivery architectures, analytics platforms, or traditional MLOps toolchains.

This stack exists to support continuous sensing, reasoning, and action, not periodic analysis.

This stack is the AI Factory.

The AI Factory as the Enabling Substrate of the Intelligent Enterprise

The AI Factory is not a product, a platform, or a team. It is the integrated technology substrate that allows intelligence to operate as a production capability.

At its core, the AI Factory provides:

· A runtime for continuous decision systems

· Dynamic data virtualization and composition

· Orchestration of GenAI and non-GenAI models

· Embedded governance, security, and audit

· Native integration with automation and robotics

In a world where intelligence is commoditized, the AI Factory exists to re-industrialize intelligence: to make abundant capability coherent, safe, economical, and scalable.

Without an AI Factory, commoditized intelligence fragments the enterprise.

With it, intelligence becomes infrastructure.

Zero-Latency Decision™ as the Operating Mechanism

Within the Intelligent Enterprise, the Zero-Latency Decision™ framework defines how the enterprise operates.

Zero-Latency Decision™ refers to the enterprise’s ability to sense relevant signals, contextualize them dynamically using enterprise semantics, decide coherently across domains, and execute immediately through automation and robotics, without organizational or architectural delay.

The AI Factory makes this technically possible.

Zero-Latency Decision™ makes it operationally real.

The Structural Data Problem the AI Factory Solves

The commoditization of intelligence exposes a second, less visible failure mode: data fragmentation at the moment of decision.

In the absence of an AI Factory, enterprises are forced to assemble decision context manually or through brittle pipelines, pre-integrating data in anticipation of future use cases. This reintroduces latency, duplicates logic, and hard-codes assumptions about how data will be used.

Without an AI Factory, data is forced into one of two failure states. It either remains siloed and inaccessible at decision time, or it is prematurely centralized into generalized repositories that cannot reflect real-time operational context. In both cases, the enterprise loses the ability to support Zero-Latency Decision™ without sacrificing coherence or control.

The AI Factory resolves this structural data problem by enabling dynamic data virtualization and composition at decision time, guided by enterprise ontology and policy. Distributed data remains distributed. Context is assembled only when required and only for the specific decision being executed.

Without an AI Factory, enterprises are forced to choose between speed and coherence.

With it, they can achieve both.

Standardization at the Stack, Personalization at the Decision

This transition creates a paradox that confuses many leadership teams.

On one side, AI Factory stacks converge, execution patterns standardize, governance models harden, and tooling differentiation collapses. On the other, decisions become more contextual, execution becomes more localized, and personalization increases.

The Intelligent Enterprise resolves this paradox by standardizing the AI Factory stack while personalizing decision logic and execution within defined constraints.

Enterprises that attempt to personalize the stack and standardize outcomes fail. Enterprises that standardize the stack and personalize decisions scale.

Why This Forces a Full Operating Redesign

The Intelligent Enterprise cannot be achieved by layering AI onto an operating model designed for episodic management.

Organizations built around periodic planning, functional silos, manual approvals, broad data centralization, and episodic execution cannot exploit commoditized intelligence, the AI Factory, or Zero-Latency Decision™.

This is why many enterprises experience the same frustration: they have models, insights, and automation, yet nothing fundamentally changes. These are not technology failures. They are architecture and operating design failures.

What This Document Establishes

This document defines the structural design of the Intelligent Enterprise, grounded in commoditized intelligence as the forcing condition, the AI Factory as the enabling technology stack, and Zero-Latency Decision™ as the operating mechanism.

The predictions that follow are not trends. They are structural consequences of operating as an Intelligent Enterprise in a world where intelligence is abundant and execution is automated.

Prediction 1

The Intelligent Enterprise Operates Through Continuous Decision Systems

Decision Cycles Collapse Permanently

By 2026, enterprises that operate as Intelligent Enterprises will no longer rely on episodic decision cycles as their primary mechanism for action.

Monthly operating reviews, quarterly planning decisions, annual budget cycles, and committee-driven approvals will cease to function as execution mechanisms. They may continue to exist as governance and alignment forums, but they will no longer be the point at which material enterprise decisions are made.

Instead, decisions will operate as continuous systems.

In the Intelligent Enterprise, a decision is not an event. It is a persistent capability. It exists as a long-lived system that continuously senses relevant signals, evaluates context, applies logic and constraints, and triggers execution without waiting for human synchronization.

Pricing, allocation, risk thresholds, fraud response, capacity balancing, supply reconfiguration, and customer prioritization will no longer “happen” at moments in time. They will run continuously.
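
As a minimal sketch of what a decision operating as a persistent capability could look like, the Python below models a hypothetical pricing decision that evaluates every incoming signal against steward-defined bounds and either executes or escalates. The names `Signal`, `DecisionSystem`, and `run`, the toy pricing rule, and the bounds are all illustrative assumptions, not a reference to any specific product or runtime.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Signal:
    """One sensed event, e.g. a demand index reading (hypothetical)."""
    name: str
    value: float


@dataclass
class DecisionSystem:
    """Hypothetical long-lived decision that evaluates every signal on arrival."""
    owner: str                        # named human steward
    lower_bound: float                # constraints set by the steward
    upper_bound: float
    logic: Callable[[Signal], float]  # decision logic, e.g. a pricing rule

    def handle(self, signal: Signal) -> Tuple[str, float]:
        proposed = self.logic(signal)
        if self.lower_bound <= proposed <= self.upper_bound:
            return ("execute", proposed)   # act immediately within guardrails
        return ("escalate", proposed)      # outside guardrails: route to the owner


def run(system: DecisionSystem, signals: Iterable[Signal]) -> List[Tuple[str, float]]:
    """Evaluate each signal as it arrives rather than at a calendar checkpoint."""
    return [system.handle(s) for s in signals]


pricing = DecisionSystem(
    owner="pricing steward",
    lower_bound=90.0,
    upper_bound=130.0,
    logic=lambda s: 100.0 + 10.0 * s.value,  # assumed toy pricing rule
)
results = run(pricing, [Signal("demand_index", 1.0), Signal("demand_index", 5.0)])
print(results)
```

In this sketch the first signal produces a price inside the bounds and is executed, while the second falls outside them and is escalated. The human role is limited to setting `lower_bound`, `upper_bound`, and `logic`.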

This change is foundational. It alters not just speed, but the nature of management, accountability, and control.

Why This Is Inevitable

This transition is not driven by managerial preference, organizational fashion, or digital ambition. It is driven by a structural mismatch between enterprise operating models and the underlying economics of intelligence and execution.

Technology now senses continuously. Technology now reasons continuously. Technology now executes continuously.

What remains episodic is the enterprise itself.

As intelligence becomes commoditized and execution becomes automated, episodic decision-making introduces latency that can no longer be absorbed or hidden. In prior eras, slow decision cycles were masked by slow execution. In 2026, delay is exposed immediately as lost opportunity, increased volatility, customer dissatisfaction, or unmanaged risk.

In a Zero-Latency Decision™ environment, the time between signal and action becomes the dominant economic variable. Even small delays compound into material losses when decisions execute thousands or millions of times per year.

Episodic decision cycles cannot compete with continuous decision systems on this axis. They are structurally outmatched.

How It Shows Up in Practice

In Intelligent Enterprises that have made this transition:

· Decisions are implemented as long-lived decision systems with clearly defined ownership, scope, constraints, and escalation logic. These systems are funded, governed, and measured as enterprise capabilities, not projects.

· Signals and events trigger evaluation immediately rather than waiting for calendar-based checkpoints. Decision logic is always on, always listening, and always ready to act within defined boundaries.

· Human forums shift decisively upstream. Management meetings focus on defining intent, adjusting constraints, resolving trade-offs, and reviewing systemic performance rather than making routine operational decisions.

· Decision performance is monitored continuously. Latency, stability, variance, economic impact, and exception rates become explicit management metrics rather than anecdotal observations.

· Learning occurs continuously. Decision systems adapt based on outcomes rather than waiting for periodic redesigns or post-mortems.

Management attention moves from execution to architecture. Execution moves from humans to machines.

Board Red Flags

Boards should be concerned when decision-making is still described primarily in terms of cadence, forums, and approvals rather than as systems with defined behavior. Language such as “we review this monthly,” “we decide quarterly,” or “we bring this to committee” signals that decisions remain episodic events rather than operational capabilities.

Another structural red flag appears when responsiveness is treated as situational rather than designed. If leadership explains speed as something that improves during crises or requires extraordinary effort, continuous decision systems do not exist. In the Intelligent Enterprise, speed is not a mode. It is a baseline property of the system.

Boards should also listen carefully for a disconnect between insight and execution. When analytics, dashboards, and models improve but execution timing does not change materially, the organization is still operating episodically. In a Zero-Latency Decision™ environment, insight without immediate action rapidly loses value.

A particularly dangerous pattern emerges when automation is introduced without redesigning decision cadence. Episodic decisions feeding automated execution increase volatility rather than reducing it. Automation faithfully amplifies whatever decision logic it receives. If that logic is slow, inconsistent, or outdated, automation accelerates failure.

Finally, boards should probe whether decision latency is measured explicitly for the enterprise’s most material decisions. If leadership cannot quantify how long it takes for a signal to become action, latency is unmanaged. In a world where intelligence is commoditized and execution is automated, unmanaged latency is not a minor inefficiency. It is a structural competitive disadvantage.
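
To make the idea of explicitly measured decision latency concrete, the following Python sketch computes signal-to-action latency from a log of timestamp pairs and summarizes it. The event log, the function names, and the choice of median, nearest-rank 95th percentile, and maximum as summary statistics are assumptions made for illustration; an enterprise would choose its own metrics and data sources.

```python
import math
from datetime import datetime, timedelta
from statistics import median
from typing import Dict, List, Tuple


def signal_to_action_latencies(events: List[Tuple[datetime, datetime]]) -> List[float]:
    """Seconds elapsed between each signal timestamp and its resulting action."""
    return [(action - signal).total_seconds() for signal, action in events]


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Median, nearest-rank 95th percentile, and worst case (non-empty input assumed)."""
    ordered = sorted(latencies)
    rank = max(1, math.ceil(0.95 * len(ordered)))  # nearest-rank method
    return {
        "median_s": median(ordered),
        "p95_s": ordered[rank - 1],
        "max_s": ordered[-1],
    }


# Hypothetical log: four fast automated actions and one that waited on a human approval.
start = datetime(2026, 1, 5, 9, 0, 0)
log = [(start, start + timedelta(seconds=s)) for s in (2, 4, 6, 8, 600)]
summary = summarize(signal_to_action_latencies(log))
print(summary)
```

The median in this made-up log looks healthy, while the tail is dominated by the single decision that waited ten minutes. Reporting tail latency alongside the median is one way to keep hidden human-in-the-loop delay visible.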

Prediction 2

Decision Architecture Replaces Functional Coordination

The Enterprise Organizes Around Decisions, Not Org Charts

By 2026, Intelligent Enterprises will no longer rely on functional hierarchies as their primary coordination mechanism.

Functions will continue to exist, but they will cease to be the organizing unit through which material enterprise outcomes are produced.

Instead, the enterprise will be organized around an explicit decision architecture.

Strategy will be expressed as a small number of economically material decisions that must operate continuously and coherently across the organization.

Functions become contributors to decisions. Decisions become the unit of execution.

This shift fundamentally changes how accountability, funding, and authority operate inside the enterprise.

Why This Is Inevitable

Functional coordination evolved in an era where decision speed was limited by human communication and manual execution. In that context, optimizing functions locally and reconciling outcomes later was viable.

That model breaks down under Zero-Latency Decision™ conditions.

As intelligence becomes commoditized and execution becomes automated, decisions propagate instantly across systems. Any inconsistency in logic, priority, or interpretation is amplified at machine speed.

Function-by-function intelligence deployment therefore creates contradictions faster than organizations can reconcile them.

Decision architecture is the only construct that can preserve coherence when decisions must execute continuously across domains.

Without it, enterprises do not merely become inefficient. They become internally adversarial at machine speed.

How It Shows Up in Practice

In Intelligent Enterprises with mature decision architecture:

· The enterprise maintains an explicit inventory of its most economically and operationally material decisions.

· Each decision has a named owner with authority over logic, constraints, and outcomes.

· Funding is allocated to decision systems rather than to isolated functional initiatives.

· Decision logic is reused across channels, regions, and business units.

· Conflicts are resolved at the decision level rather than escalated through functional hierarchy.

Strategy discussions move away from organizational structure and toward decision coverage, decision performance, and decision risk.
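
The decision inventory described above can be sketched as a simple data structure. The Python below is an illustrative assumption about how such an inventory might be represented: the `Decision` fields, the example entries, and the two checks (decisions with no named owner, and decisions funded by more than one function) are hypothetical and chosen only to show the kind of questions an explicit inventory makes answerable.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Decision:
    name: str
    owner: Optional[str]                   # single accountable owner, or None
    funding_by_function: Dict[str, float]  # which functions invest in this decision


def unowned(inventory: List[Decision]) -> List[str]:
    """Decisions that cut across functions without a named owner."""
    return [d.name for d in inventory if d.owner is None]


def funded_by_multiple_functions(inventory: List[Decision]) -> List[str]:
    """Decisions where funding follows organizational boundaries, not the decision."""
    return [d.name for d in inventory if len(d.funding_by_function) > 1]


# Hypothetical inventory entries with made-up figures.
inventory = [
    Decision("pricing", None, {"marketing": 2.0, "sales": 1.5}),
    Decision("fulfillment_allocation", "decision owner A", {"operations": 3.0}),
]
print(unowned(inventory), funded_by_multiple_functions(inventory))
```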

Board Red Flags

Boards should be concerned when accountability for outcomes is still framed primarily in functional terms. Statements such as “marketing owns pricing,” “operations owns fulfillment,” or “IT owns automation” often indicate that decisions cut across functions without a single point of ownership. In a Zero-Latency Decision™ environment, this ambiguity leads to fragmented execution and silent failure.

Another structural red flag appears when enterprises pursue AI or automation initiatives function by function without a unifying decision framework. Boards may hear progress reports that sound positive in isolation, yet outcomes remain inconsistent across the enterprise. This usually signals that intelligence is being deployed vertically while decisions operate horizontally, a mismatch that cannot be corrected through coordination alone.

Boards should also be alert when committees are positioned as decision owners. Committees can provide oversight, but they cannot operate continuous decision systems. When material decisions depend on committee consensus to execute, latency and dilution are guaranteed. In Intelligent Enterprises, committees set constraints. They do not run decisions.

A particularly subtle warning sign emerges when funding follows organizational boundaries rather than decision impact. If multiple functions are independently investing in capabilities that influence the same decision, duplication and contradiction are already embedded. This often surfaces later as integration cost, governance conflict, or inconsistent customer and operational outcomes.

Finally, boards should probe whether leadership can clearly articulate the enterprise’s most critical decisions and explain how they operate end to end. If executives struggle to describe how a decision senses signals, applies logic, and triggers execution across functions, then decision architecture does not exist in practice. In that case, the organization is still relying on functional coordination in a world that now punishes it.

Prediction 3

Humans Become Stewards of Decision Systems

The Role of People Is Redefined in the Intelligent Enterprise

By 2026, Intelligent Enterprises will no longer rely on humans as the primary executors of high-frequency, repeatable decisions.

Instead, people will be repositioned as stewards of decision systems.

Human responsibility shifts from making routine decisions to defining intent, objectives, constraints, escalation logic, and risk tolerance.

Decisions that must operate continuously will be executed by machines within these boundaries.

Humans remain accountable, but they no longer act as the bottleneck.

This is not a reduction in human importance. It is a reallocation of human judgment to where it actually matters.

Why This Is Inevitable

As intelligence becomes commoditized and execution becomes automated, the economic value of human involvement in routine decisions collapses.

Humans are slower, less consistent under load, and more expensive than automated execution.

At the same time, the value of human judgment in ambiguity, ethical trade-offs, risk interpretation, system design, and accountability increases sharply.

In a Zero-Latency Decision™ environment, keeping humans in the loop for execution destroys speed and coherence.

Removing humans entirely destroys trust and control.

The only stable equilibrium is human stewardship over machine execution.

Enterprises that fail to redesign roles accordingly will oscillate between over-automation and manual override, never achieving scale or reliability.

How It Shows Up in Practice

In Intelligent Enterprises:

· Decision ownership becomes a formal role, distinct from functional management.

· Humans set decision policy, guardrails, and escalation thresholds.

· Automation executes within defined boundaries and routes exceptions upward.

· Performance reviews focus on outcome stability and system improvement, not activity volume.

· Training shifts from tool proficiency to systems thinking, judgment, and risk management.

Management time moves upstream. Firefighting declines. Trust in automated execution increases over time.
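
One way to picture designed escalation thresholds, as opposed to ad hoc exception handling, is the Python sketch below. It assumes a hypothetical two-threshold policy keyed on financial exposure; the `StewardshipPolicy` fields, the route names, and the figures are illustrative assumptions only. It also computes the share of cases that required human intervention, which is a rough proxy for how much latency remains hidden in manual approval.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class StewardshipPolicy:
    """Guardrails set by a human decision owner (all values hypothetical)."""
    auto_limit: float   # at or below this exposure, the machine executes
    owner_limit: float  # at or below this exposure, the decision owner reviews


def route(policy: StewardshipPolicy, exposure: float) -> str:
    """Route a proposed action according to designed thresholds."""
    if exposure <= policy.auto_limit:
        return "auto_execute"
    if exposure <= policy.owner_limit:
        return "escalate_to_owner"
    return "escalate_to_executive"


def intervention_rate(routes: List[str]) -> float:
    """Fraction of cases that needed a human (non-empty input assumed)."""
    escalated = sum(1 for r in routes if r != "auto_execute")
    return escalated / len(routes)


policy = StewardshipPolicy(auto_limit=10_000, owner_limit=100_000)
routes = [route(policy, x) for x in (500, 2_000, 9_000, 50_000, 250_000)]
rate = intervention_rate(routes)
print(routes, rate)
```

If the intervention rate in a real system sat above one half, that would match the red flag of humans remaining embedded in execution by default.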

Board Red Flags

Boards should be concerned when leadership frames the human role in AI primarily in terms of productivity gains or headcount reduction. This narrative almost always signals that stewardship, governance, and accountability have been under-designed.

Another structural red flag appears when no one can clearly explain who owns an outcome once a decision is automated. Statements such as “the system decided” or “the model did it” indicate a breakdown in accountability. In an Intelligent Enterprise, machines execute decisions, but humans always own outcomes.

Boards should also watch for signs that humans remain embedded in execution by default. If automation exists but humans still approve, reconcile, or manually intervene in the majority of cases, decision latency has merely been hidden, not removed.

A particularly dangerous pattern emerges when exception handling is informal or ad hoc. If escalation paths are unclear or depend on individual judgment rather than designed thresholds, the enterprise becomes vulnerable under stress.

Finally, boards should probe how leadership is preparing the workforce for stewardship roles. If training, incentives, and career paths still reward manual coordination and activity rather than system design and outcome quality, the enterprise will struggle to retain trust and talent.

Prediction 4

Data Architecture Is Rebuilt Around Decisions

Broad Centralization Collapses; Dynamic Composition Takes Over

By 2026, Intelligent Enterprises will no longer pursue broad enterprise data centralization as a prerequisite for intelligence or automation.

Large, multi-year data unification programs designed to create a single, comprehensive enterprise data layer will largely be abandoned or materially scaled back.

Selective centralization will persist where it is structurally unavoidable, most notably for core ERP, financial, and regulatory reporting data.

Outside of these narrow domains, data will remain distributed and will be accessed, combined, and contextualized dynamically at the moment decisions are made.

This marks a decisive break from decades of data strategy built around pre-integration and anticipation of future use cases.

In the Intelligent Enterprise, data architecture is designed around decisions, not repositories.

Why This Is Inevitable

Traditional data centralization strategies were built for an era of slow analytics, batch processing, and human-mediated decision-making.

In that context, pre-integrating data and reconciling meaning in advance was the only way to make analysis possible.

That logic collapses in a Zero-Latency Decision™ environment.

When decisions must execute continuously, waiting for data to be centralized, cleaned, and modeled in advance introduces unacceptable latency.

Equally problematic, broad centralization hard-codes assumptions about how data will be used, which decisions will matter, and which relationships are relevant.

As decision logic evolves, centralized schemas become brittle constraints rather than enablers.

The result is a false trade-off between speed and coherence that Intelligent Enterprises can no longer accept.

How It Shows Up in Practice

In Intelligent Enterprises that have rebuilt their data architecture:

· Core transactional and regulatory data is centralized only where required for control, compliance, and financial integrity.

· All other operational, contextual, and external data remains distributed in source systems or domain-aligned platforms.

· Data is virtualized and composed dynamically at decision time based on the specific needs of the decision being executed.

· Enterprise ontology governs meaning, relationships, and permissible combinations of data in real time.

· Data pipelines are replaced or augmented by decision-time composition and semantic mediation.

The enterprise gains both speed and coherence without forcing premature unification.
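
Decision-time composition, as described above, can be illustrated with a small Python sketch. It assumes three hypothetical source systems exposed as lookup functions and a field-to-source routing table; none of these names or values come from a real platform. The point of the sketch is that data stays in its source, and only the fields a given decision requires are fetched when that decision runs.

```python
from typing import Callable, Dict, List

calls: List[str] = []  # records which source systems were actually queried


def crm_lookup(customer_id: str) -> Dict[str, object]:
    calls.append("crm")
    return {"segment": "enterprise"}


def erp_lookup(customer_id: str) -> Dict[str, object]:
    calls.append("erp")
    return {"open_orders": 3}


def risk_lookup(customer_id: str) -> Dict[str, object]:
    calls.append("risk")
    return {"credit_limit": 50000}


# Hypothetical field-to-source routing; an ontology and policy layer could supply this.
SOURCES: Dict[str, Callable[[str], Dict[str, object]]] = {
    "segment": crm_lookup,
    "open_orders": erp_lookup,
    "credit_limit": risk_lookup,
}


def compose_context(customer_id: str, required_fields: List[str]) -> Dict[str, object]:
    """Assemble only the fields this decision needs, at the moment it runs."""
    return {f: SOURCES[f](customer_id)[f] for f in required_fields}


# A credit decision needs segment and credit limit, so the ERP source is never touched.
context = compose_context("C-1", ["segment", "credit_limit"])
print(context, calls)
```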

Board Red Flags

Boards should be concerned when data strategy is still framed primarily in terms of enterprise-wide unification or the creation of a single, all-purpose data platform. While such initiatives may sound comprehensive, they often embed years of delay and lock the organization into outdated assumptions.

Another red flag appears when value realization is dependent on future phases of data integration. Statements such as “once the data is fully integrated” or “after the next wave of ingestion” indicate that decision latency has been institutionalized.

Boards should also question narratives that frame data quality issues as purely technical problems. In many cases, recurring data quality failures are actually semantic failures caused by the absence of an enterprise ontology and decision-centric context.

A particularly subtle warning sign emerges when leaders describe a trade-off between speed and control. This usually indicates that data architecture is doing work that decision systems and governance should be handling.

Finally, boards should probe whether leadership can explain how data is assembled at the moment a decision is executed. If executives cannot articulate how distributed data is safely and coherently composed in real time, the enterprise is likely still operating on a centralized, latency-prone model that cannot support Intelligent Enterprise ambitions.

Prediction 5

Enterprise Ontology Becomes the Semantic Control Plane

Meaning Is Governed Explicitly Across Decisions, Data, and Execution

By 2026, Intelligent Enterprises will treat enterprise ontology as a first-class asset rather than a technical afterthought.

Ontology will become the semantic control plane that governs meaning consistently across data, decision logic, automation, and robotics.

This shift moves meaning out of code, spreadsheets, and tribal knowledge and into an explicit, governed enterprise construct.

In a Zero-Latency Decision™ environment, unmanaged meaning becomes a systemic risk.

Ontology is the mechanism through which coherence is preserved while speed increases.

Why This Is Inevitable

As data becomes distributed and dynamically composed at decision time, implicit assumptions about meaning can no longer be tolerated.

When meaning is embedded locally in pipelines, dashboards, models, or automation logic, inconsistencies propagate at machine speed.

Historically, enterprises relied on slow reconciliation and human judgment to resolve semantic differences.

That safety net disappears when decisions execute continuously.

Without an explicit ontology, the enterprise loses the ability to reason coherently about its own operations.

Ontology is therefore not an academic exercise. It is a prerequisite for scalable autonomy.

How It Shows Up in Practice

In Intelligent Enterprises with a mature ontology layer:

· Core business concepts, relationships, constraints, and hierarchies are explicitly defined and governed.

· Ontology is tied directly to decision domains rather than generic data models.

· Dynamic data composition is mediated through ontology at decision time.

· Automation and robotics consume ontology to ensure consistent interpretation of state and intent.

· Changes in meaning propagate systematically rather than through manual rework.

The enterprise can move faster without fragmenting understanding.
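
As a rough illustration of ontology acting as a semantic control plane, the Python sketch below resolves local terms to governed concepts and checks whether two concepts may be combined. The synonym table, the concept set, and the permitted pairings are invented for this example, and a production ontology would be far richer than a pair of lookup tables.

```python
from typing import Dict, FrozenSet, Set

# Hypothetical ontology fragment: local synonyms, governed concepts, allowed pairings.
SYNONYMS: Dict[str, str] = {
    "client": "customer",
    "account_holder": "customer",
    "purchase": "order",
}
CONCEPTS: Set[str] = {"customer", "order", "health_record"}
PERMITTED: Set[FrozenSet[str]] = {frozenset({"customer", "order"})}


def canonical(term: str) -> str:
    """Map a local term to its governed concept, or fail loudly if ungoverned."""
    term = term.lower()
    resolved = SYNONYMS.get(term, term)
    if resolved not in CONCEPTS:
        raise KeyError(f"'{term}' is not a governed concept")
    return resolved


def may_combine(a: str, b: str) -> bool:
    """True only if the ontology explicitly permits combining the two concepts."""
    return frozenset({canonical(a), canonical(b)}) in PERMITTED


print(canonical("Client"))                         # resolves a local synonym
print(may_combine("client", "purchase"))           # permitted pairing
print(may_combine("customer", "health_record"))    # not permitted in this fragment
```

Because every system resolves terms through the same `canonical` function in this sketch, a change to the synonym table propagates everywhere at once rather than through manual rework.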

Board Red Flags

Boards should be concerned when semantic consistency is assumed rather than governed. Phrases such as “everyone knows what that means” or “we standardize locally” usually signal that meaning is embedded informally and will fracture under scale.

Another red flag appears when data quality issues recur despite repeated remediation efforts. In many cases, the underlying problem is not data accuracy but semantic disagreement caused by the absence of a shared ontology.

Boards should also be cautious when ontology is positioned purely as a data or IT initiative. When meaning governance is divorced from decision ownership, it loses authority and relevance.

A particularly dangerous pattern emerges when automation or AI systems interpret the same concept differently across domains. These failures often appear as inexplicable anomalies but are rooted in semantic incoherence.

Finally, boards should probe whether leadership can explain how meaning is governed as decisions execute autonomously. If ontology is not part of the decision runtime, the enterprise is operating without semantic control at precisely the moment it matters most.

Prediction 6

The AI Factory Becomes the Runtime of the Intelligent Enterprise

Intelligence Is Industrialized Into a Governed, Continuous Runtime

By 2026, Intelligent Enterprises will no longer treat artificial intelligence as a collection of projects, tools, or isolated use cases.

Instead, intelligence will be operated through an AI Factory that functions as the runtime of the enterprise.

The AI Factory becomes the system of record for enterprise intelligence, governing how models, data, decisions, automation, and execution interact.

This shift marks the transition from experimentation with intelligence to the industrialization of intelligence.

In a world where intelligence is commoditized, the AI Factory is what restores coherence, control, and economic leverage.

Why This Is Inevitable

As access to powerful models becomes universal, differentiation no longer comes from model ownership or algorithmic novelty.

Without a unifying runtime, enterprises accumulate fragmented intelligence assets that are expensive to operate, difficult to govern, and risky to scale.

Project-based AI delivery produces duplicated pipelines, inconsistent assumptions, and unmanaged cost and risk.

This fragmentation is tolerable when intelligence is scarce. It becomes catastrophic when intelligence is abundant and execution is automated.

The AI Factory is therefore not an architectural preference. It is a structural necessity for operating intelligence at scale.

How It Shows Up in Practice

In Intelligent Enterprises that have built an AI Factory:

Models are deployed, monitored, governed, and retired through a common runtime.

GenAI and non-GenAI models are orchestrated together rather than managed in separate stacks.

Dynamic data virtualization and ontology-driven composition are native capabilities.

Governance, security, auditability, and cost controls are embedded in the runtime rather than bolted on.

Automation and robotics are directly integrated as execution targets.

Intelligence behaves as infrastructure rather than as a series of bespoke implementations.

Board Red Flags

Boards should be concerned when AI progress is reported primarily in terms of the number of use cases, pilots, or deployed models. This framing often hides deep fragmentation and masks the absence of an operating runtime.

Another red flag appears when AI costs are unpredictable or difficult to attribute to business outcomes. This usually indicates that intelligence is being assembled ad hoc rather than operated through a governed factory.

Boards should also question architectures where governance, security, or compliance are layered outside the AI execution path. In such designs, controls inevitably lag behavior and fail under scale.

A particularly dangerous pattern emerges when GenAI systems are allowed to operate independently of the enterprise AI Factory. This creates ungoverned decision channels and reintroduces data and execution risk.

Finally, boards should probe whether leadership can explain how intelligence flows from data to decision to execution within a single runtime. If that flow cannot be articulated end to end, the AI Factory does not yet exist in practice.

Prediction 7

LLMs Become Managed Subsystems of the AI Factory

Language Intelligence Is Governed, Not Free-Running

By 2026, Intelligent Enterprises will no longer deploy Large Language Models as independent tools, copilots, or isolated productivity aids.

LLMs will instead operate as managed subsystems within the enterprise AI Factory.

Language intelligence will be embedded inside decision systems, workflows, and execution paths rather than sitting outside them.

This shift reflects a broader realization that ungoverned language intelligence creates systemic risk when scaled across the enterprise.

In the Intelligent Enterprise, LLMs augment reasoning and interaction, but they do not bypass architecture, governance, or accountability.

Why This Is Inevitable

Language is uniquely powerful because it collapses data access, reasoning, instruction, and authority into a single interface.

When LLMs are deployed outside a governed runtime, they effectively become shadow decision systems.

They can access sensitive data, influence decisions, and trigger actions without consistent controls.

As LLM usage scales, this creates fragmentation, cost volatility, compliance exposure, and erosion of accountability.

Enterprises that attempt to govern LLMs purely through policy or usage guidelines will fail.

Only architectural containment within the AI Factory allows LLM usage to scale safely.

How It Shows Up in Practice

In Intelligent Enterprises that have integrated LLMs correctly:

LLMs are accessed exclusively through AI Factory interfaces.

All prompts, responses, and actions are logged, auditable, and policy-governed.

LLMs operate within explicit decision context rather than answering in isolation.

Ontology constrains interpretation and grounding.

LLMs assist with explanation, interpretation, synthesis, and interaction rather than acting as decision authorities.

Execution triggered by language is mediated through decision systems and automation layers.
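
For teams that must translate these practices into design, the pattern can be illustrated with a minimal sketch. It is an assumption-laden illustration, not a reference implementation: the names LLMGateway, AuditRecord, stub_model, and the action identifiers are hypothetical, and the model is a stand-in function rather than any vendor API. The sketch shows three of the practices above: a single access point, a logged record for every exchange, and language-triggered actions that are approved only if policy allows them.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set, Tuple


@dataclass(frozen=True)
class AuditRecord:
    """One logged exchange (hypothetical schema)."""
    caller: str
    decision_context: str
    prompt: str
    response: str
    proposed_action: Optional[str]
    action_approved: bool


@dataclass
class LLMGateway:
    """Hypothetical single entry point through which all LLM calls pass."""
    model: Callable[[str], str]   # any text-in, text-out model (assumed)
    allowed_actions: Set[str]     # policy: actions that language may request
    audit_log: List[AuditRecord] = field(default_factory=list)

    def ask(
        self,
        caller: str,
        decision_context: str,
        prompt: str,
        proposed_action: Optional[str] = None,
    ) -> Tuple[str, bool]:
        # The prompt is always framed by an explicit decision context.
        response = self.model(f"[context: {decision_context}] {prompt}")
        # The gateway never executes anything itself. It only reports
        # whether policy permits handing the action to a decision system.
        approved = (
            proposed_action is not None
            and proposed_action in self.allowed_actions
        )
        self.audit_log.append(
            AuditRecord(caller, decision_context, prompt, response,
                        proposed_action, approved)
        )
        return response, approved


def stub_model(prompt: str) -> str:
    """Stand-in for a real model, used only for illustration."""
    return f"summary of: {prompt}"


gateway = LLMGateway(model=stub_model, allowed_actions={"open_review_ticket"})
_, ticket_ok = gateway.ask(
    "analyst-1", "credit-limit-review",
    "Explain the proposed limit change", "open_review_ticket",
)
_, limit_ok = gateway.ask(
    "analyst-1", "credit-limit-review",
    "Raise the limit now", "change_credit_limit",
)
```

In this sketch the second request is logged but not approved, because changing a credit limit is not on the list of actions that language may request. Both exchanges remain in the audit log regardless of outcome.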

Board Red Flags

Boards should be concerned when LLM adoption is characterized primarily by tool proliferation. A landscape of disconnected copilots and chat interfaces almost always signals loss of control.

Another red flag appears when LLM outputs are treated as authoritative or self-validating. Statements such as “the model said so” indicate that accountability has been displaced rather than strengthened.

Boards should also watch for unexplained volatility in AI costs. Ungoverned LLM usage often leads to rapid and unpredictable consumption of compute resources.

A particularly dangerous pattern emerges when LLMs are allowed to trigger actions directly, bypassing decision logic, policy enforcement, or automation controls. This creates unmonitored execution paths that can amplify errors at scale.

Finally, boards should probe whether leadership can explain how LLM behavior is constrained, monitored, and integrated into the enterprise decision runtime. If language intelligence operates outside the AI Factory, the enterprise is accumulating hidden risk.

Prediction 8

Value Shifts from Generative AI to Operational Intelligence

Execution-Centered Intelligence Dominates Enterprise Economics

By 2026, the majority of measurable enterprise value from AI will come from non-generative and hybrid intelligence embedded directly into operational execution.

Generative AI will remain important, but it will no longer be the primary driver of sustained economic advantage.

Instead, value will accrue to enterprises that embed prediction, optimization, and control logic directly into high-frequency operational decisions.

Operational intelligence compounds value because it executes continuously rather than episodically.

The Intelligent Enterprise prioritizes intelligence that changes outcomes, not intelligence that merely explains them.

Why This Is Inevitable

Generative AI excels at interpretation, synthesis, and interaction, but its economic impact is often indirect.

By contrast, operational intelligence directly influences cost, revenue, risk, and service levels at scale.

As intelligence becomes commoditized, explanatory capability alone does not differentiate.

What differentiates is the ability to repeatedly make better decisions faster and execute them automatically.

High-frequency operational decisions compound advantage thousands or millions of times per year.

This compounding effect overwhelms the episodic value created by most generative applications.

How It Shows Up in Practice

In Intelligent Enterprises that focus on operational intelligence:

AI investments concentrate on decisions embedded in core processes such as pricing, allocation, routing, inventory, credit, fraud, and capacity management.

Non-GenAI models and optimization engines operate continuously within the AI Factory runtime.

Generative AI is layered on top to provide explanation, insight, and interaction rather than primary control.

Use cases are explicitly segmented by decision frequency, risk profile, and execution modality.

Different classes of decisions are implemented in different architectural locations based on these characteristics.

The enterprise avoids forcing all intelligence into a single interaction paradigm.
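
The segmentation described above can be made concrete with a small sketch. Everything in it is an assumption for illustration: the three placement categories, the threshold of 1,000 decisions per day, the risk labels, and the example decision classes are hypothetical and would be set by each enterprise's own decision stewards.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    """Hypothetical architectural locations for a class of decisions."""
    AUTONOMOUS_RUNTIME = "autonomous execution in the AI Factory runtime"
    HUMAN_APPROVAL = "machine recommendation with human approval"
    ADVISORY = "explanation and interaction only"


@dataclass(frozen=True)
class DecisionClass:
    name: str
    decisions_per_day: int
    risk: str              # "low", "medium", or "high" (assumed labels)
    executes_action: bool  # False for purely informational use cases


def place(decision: DecisionClass, high_frequency: int = 1000) -> Placement:
    """Assign a decision class to a location by modality, risk, and frequency."""
    if not decision.executes_action:
        return Placement.ADVISORY
    if decision.risk == "high":
        return Placement.HUMAN_APPROVAL
    if decision.decisions_per_day >= high_frequency:
        return Placement.AUTONOMOUS_RUNTIME
    return Placement.HUMAN_APPROVAL


routing = DecisionClass("delivery routing", 50_000, "low", True)
large_credit = DecisionClass("large credit approval", 40, "high", True)
briefing = DecisionClass("performance briefing summary", 200, "low", False)

placements = {d.name: place(d) for d in (routing, large_credit, briefing)}
```

The point of the sketch is the ordering of the tests rather than the specific values: execution modality is checked first, risk second, and frequency last, so a high-risk decision is never placed in the autonomous runtime on the basis of volume alone.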

Board Red Flags

Boards should be concerned when AI portfolios are dominated by generative use cases with unclear linkage to operational outcomes. While such initiatives may demonstrate capability, they rarely compound value at scale.

Another red flag appears when operational processes remain largely manual while generative tools proliferate. This inversion indicates misaligned priorities and underinvestment in execution.

Boards should also question narratives that treat all AI use cases as architecturally equivalent. In reality, high-frequency operational decisions demand fundamentally different controls than low-risk informational interactions.

A particularly dangerous pattern emerges when generative systems are positioned as decision authorities in operational contexts. This often reflects a misunderstanding of risk, control, and economic leverage.

Finally, boards should probe whether leadership can articulate where different classes of AI use cases live within the enterprise architecture. If all intelligence is treated as interchangeable, value and control will erode together.

Prediction 9

Automation and Robotics Converge into Cognitive Execution Systems

Decisions Flow Directly into Digital and Physical Action

By 2026, Intelligent Enterprises will no longer treat automation and robotics as separate domains with distinct governance, tooling, and operating models.

Automation and robotics will converge into unified cognitive execution systems that consume decisions directly from the AI Factory runtime.

Execution will no longer be a downstream activity triggered by humans. It will be the immediate continuation of decision logic.

This convergence completes the transition from insight-driven organizations to execution-driven enterprises.

Without cognitive execution, Zero-Latency Decision™ remains theoretical.

Why This Is Inevitable

Automation matured first in digital domains, while robotics evolved largely in physical environments.

Historically, these domains were separated by tooling, skill sets, and organizational boundaries.

As decisions become continuous and autonomous, this separation becomes untenable.

A decision that cannot execute immediately across both digital and physical systems introduces artificial latency.

Maintaining separate execution stacks forces translation layers, manual handoffs, and duplicated logic.

The Intelligent Enterprise collapses this boundary by treating execution as a single, decision-driven fabric.

How It Shows Up in Practice

In Intelligent Enterprises with converged execution systems:

Decisions emitted by the AI Factory trigger both digital automation and physical robotic action.

Workflow orchestration spans software, machinery, fleets, and facilities.

Exception handling is designed into execution flows rather than managed informally.

Telemetry from execution feeds back into decision systems continuously.

Learning loops connect outcomes to future decision logic.

The enterprise operates as a closed-loop system across digital and physical domains.

Board Red Flags

Boards should be concerned when automation and robotics are governed as separate initiatives with different success metrics and control structures. This separation almost always introduces latency and inconsistency.

Another red flag appears when execution remains heavily manual despite advanced decision and analytics capabilities. This indicates that intelligence is being produced faster than the enterprise can act on it.

Boards should also question narratives that frame automation purely as a cost-reduction lever. In Intelligent Enterprises, execution quality, speed, and reliability matter as much as efficiency.

A particularly dangerous pattern emerges when exception handling is left to frontline improvisation. Under scale and stress, informal execution collapses.

Finally, boards should probe whether leadership can explain how execution outcomes are measured, learned from, and fed back into decision systems. If execution does not close the loop, the enterprise cannot improve autonomously.

Prediction 10

Boards Become Stewards of Decision Capacity and Machine-Executed Risk

Governance Shifts from Outcomes to Systemic Decision Capability

By 2026, Boards of Intelligent Enterprises will expand their governance remit beyond strategy approval and outcome oversight to include stewardship of enterprise decision capacity.

This does not mean boards become technologists or operators. It means they explicitly govern how decisions are made, executed, and controlled when machines operate at scale.

As Zero-Latency Decision™ and cognitive execution become structural, risk moves upstream into architecture, policy, and system design.

Boards remain accountable regardless of delegation. What changes is where accountability must be exercised.

In the Intelligent Enterprise, boards do not govern AI. They govern decision systems.

Why This Is Inevitable

Traditional governance assumes that humans make decisions, systems support execution, and accountability is localized.

That assumption no longer holds when decisions are executed continuously by machines.

In Intelligent Enterprises, failure modes are systemic rather than isolated.

Risk propagates through interconnected decision systems at machine speed.

By the time outcomes appear in financial, operational, or compliance reports, the underlying causes are already embedded.

Boards that do not shift upstream will find themselves governing consequences rather than causes.

How It Shows Up in Practice

In Intelligent Enterprises with mature governance:

Boards receive visibility into decision latency, autonomy levels, and exception rates.

Autonomous decision scope is explicitly defined, reviewed, and adjusted over time.

Dependency and concentration risk across AI Factory components is monitored.

Governance discussions focus on systemic behavior rather than isolated incidents.

Executive incentives are aligned to decision performance, stability, and resilience.

Board agendas evolve from periodic AI updates to continuous oversight of decision capacity.
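
As an illustration of the visibility described above, the following minimal sketch derives decision latency, autonomy level, and exception rate from a log of decision events. The DecisionEvent schema, the field names, and the sample values are hypothetical; a real enterprise would draw these from its own runtime telemetry and would likely report them by decision domain.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass(frozen=True)
class DecisionEvent:
    """One executed decision (hypothetical telemetry schema)."""
    domain: str
    latency_seconds: float  # time from signal to executed action
    autonomous: bool        # True if no human approved the decision
    exception: bool         # True if the decision was escalated or failed


def board_summary(events: List[DecisionEvent]) -> Dict[str, float]:
    """Aggregate a decision log into three board-level indicators."""
    if not events:
        raise ValueError("at least one decision event is required")
    total = len(events)
    return {
        "median_latency_seconds": median(e.latency_seconds for e in events),
        "autonomy_rate": sum(e.autonomous for e in events) / total,
        "exception_rate": sum(e.exception for e in events) / total,
    }


# Illustrative values only; not drawn from any real enterprise.
sample_log = [
    DecisionEvent("pricing", 0.25, True, False),
    DecisionEvent("pricing", 0.5, True, False),
    DecisionEvent("credit", 30.0, False, True),
    DecisionEvent("routing", 0.75, True, False),
]
summary = board_summary(sample_log)
```

The median is used here rather than the mean so that a single slow, human-approved decision does not dominate the latency figure. Whether that is the right choice depends on what the board intends to govern.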

Board Red Flags

Boards should be deeply concerned when AI, automation, or digital intelligence appear only as periodic agenda items rather than as standing governance topics. This often signals that intelligence is still viewed as a set of initiatives rather than as a core operating capability.

Another structural red flag appears when oversight focuses exclusively on ethics statements, principles, or compliance checklists without corresponding discussion of operational control. Ethical intent without architectural enforcement creates a false sense of safety.

Boards should also watch for a mismatch between autonomy and visibility. If leadership cannot clearly articulate which decisions are executed autonomously, under what constraints, and with what escalation logic, governance is occurring after the fact.

A particularly dangerous pattern emerges when decision failures are treated as isolated errors rather than symptoms of system design. In Intelligent Enterprises, most failures are systemic, not personal.

Finally, boards should probe whether they are asking, and being asked, the right questions. If management reports outcomes without discussing decision capacity, latency, and coherence, the board is governing results rather than resilience.

Conclusion

The Intelligent Enterprise Is No Longer Optional

2026 as the Point of No Return

The transition described in this document is not an optimization cycle, a digital transformation phase, or an AI adoption wave. It is a structural shift in how enterprises exist, compete, and govern themselves.

By 2026, the conditions that once allowed enterprises to delay fundamental operating redesign no longer hold. Intelligence has been commoditized. Execution has been automated. Speed has become visible. Latency has become measurable. Fragmentation has become expensive. In this environment, incrementalism is no longer neutral. It is a strategic liability.

The Intelligent Enterprise is not defined by the presence of AI. It is defined by the absence of delay between sensing, deciding, and acting, and by the ability to do so coherently, safely, and repeatedly at scale.

Many enterprises will believe they are progressing because they are deploying more AI, more automation, and more advanced tools. Most will not be.

The decisive factor is not tool adoption. It is whether the enterprise has redesigned itself around continuous decision systems, decision architecture, decision stewardship, dynamic data composition, enterprise ontology, the AI Factory, cognitive execution, and governance of decision capacity.

Enterprises that do not make these shifts will still appear modern. They will still demonstrate pilots, dashboards, copilots, and use cases. What they will lack is structural coherence.

A recurring failure pattern among large enterprises is the belief that this transition is elective or deferrable. It is not.

Once competitors operate with Zero-Latency Decision™, continuous execution, and AI Factories as runtime infrastructure, they compress response times, reduce variance, and compound advantage in ways that episodic enterprises cannot match.

As decision-making becomes automated and execution becomes machine-driven, accountability does not diminish. It intensifies.

Risk no longer sits primarily in individual decisions. It sits in architecture, decision scope definitions, autonomy boundaries, ontology governance, exception design, and runtime controls.

Boards and Executive Committees that continue to govern outcomes without governing decision capacity will consistently arrive too late.

The paradox facing G2000 enterprises in 2026 is that intelligence is abundant, but advantage is scarce; capability is accessible, but coherence is rare; automation is powerful, but control is fragile.

The Intelligent Enterprise is not a future vision. It is an operating requirement that has already arrived.

The question for 2026 is not whether enterprises will deploy AI, automation, or robotics. They all will.

The question is whether they will operate them as fragmented tools or govern them as an integrated decision and execution system.