t in building the logic that differentiates its business

The CLEARED AI Framework™ provides the design method and roadmap for this logic layer.

The AI-ENABLED Enterprise Index™ provides the measure of progress.

Together, they form the foundation of the modern operating model that enables Zero-Latency performance.

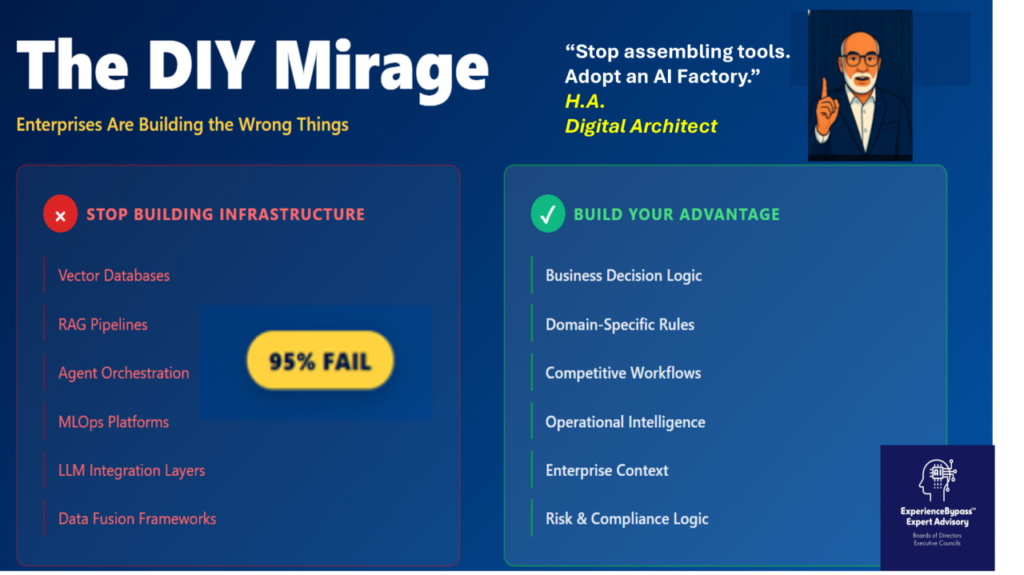

1. The New DIY Confusion: Building the Wrong Things

AI feels accessible. Cloud providers offer vector search, embeddings, data services, and orchestration primitives. Open source tools provide RAG patterns and model interfaces. Large language models offer promising shortcuts. These ingredients create a sense that enterprises can assemble their own AI platform.

But assembling tools is not the same as building a platform, and none of these tools provides an effective data integration solution.

There are two categories of work in enterprise AI:

A. DIY that creates competitive differentiation

This includes:

– encoding domain specific decision logic

– adapting workflows to operational realities

– integrating AI into frontline processes

– building or tuning reasoning and planning agents

– customizing intelligence for unique business needs

– aligning solutions with enterprise data and context

This is where enterprises win.

B. DIY that destroys value

This includes trying to build:

– data integration and semantic fusion frameworks

– vector search infrastructure

– RAG pipelines and memory layers

– agent orchestration runtimes

– workflow engines and state management

– MLOps and model lifecycle automation

– LLM integration layers

– observability, governance, and security substrate

This work does not differentiate the business.

It drains talent, delays progress, and produces brittle systems that cannot scale.

2. Why Enterprises Fall Into This Pattern: The Illusion of Accessibility

The early stages of a technological wave always create the same illusion. Leaders see tools that appear to lower the barriers to entry. Teams build early prototypes that seem promising. Cloud services appear to reduce complexity.

The result is a false sense of capability.

History shows that this pattern repeats across every major enterprise technology shift:

Custom ERP Systems in the 1990s

Organizations attempted to build their own ERP integrations and workflow systems using a best-of-breed approach, believing their operations were too unique for across-the-board packaged solutions. Most projects suffered delays, cost overruns, and eventual abandonment. SAP and Oracle replaced those internal builds.

Custom Data Warehouses in the 2000s

Enterprises built bespoke ETL pipelines, reporting layers, and analytics stacks. These systems became fragile, costly, and difficult to maintain. Snowflake, BigQuery, and Databricks replaced them.

DIY Internal Clouds in the 2010s

Many organizations tried to create internal cloud platforms to avoid vendor dependency. Progress was slow, expensive, and unsustainable. AWS, Azure, and Google Cloud replaced them.

The pattern is unmistakable.

When enterprises build foundational platforms whose cost vendors can amortize across thousands of customers, the internal build loses every time.

AI has been stuck in this same DIY cycle for several years.

3. Why DIY of the AI Stack Fails: Complexity, Cost, and Volatility

The new AI stack is fundamentally different from past enterprise systems. It is far more dynamic, more complex, and more intertwined with real time operational processes.

Building even a fraction of the modern AI-native infrastructure requires solving problems in:

– multi modal data ingestion and fusion

– data virtualization that does not compromise the integrity of operational environments

– semantic search and vector retrieval

– reasoning and planning orchestration

– RAG and memory pipelines

– identity, security, and access layers

– workflow engines and state machines

– MLOps, versioning, and lifecycle control

– monitoring, drift detection, and auditing

– model abstraction and replaceability

– deterministic control over probabilistic models
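
To make the last two items concrete, here is a minimal Python sketch of what "deterministic control over probabilistic models" and "model abstraction and replaceability" can look like in code. Every name in it (`Proposal`, `guarded_decision`, `ALLOWED_ACTIONS`, `stub_model`, the 0.8 threshold) is a hypothetical chosen for illustration, not part of any specific platform. The idea is that the model only proposes, fixed rules decide, and any model exposing the same call signature can be swapped in.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action vocabulary; a real deployment would define its own.
ALLOWED_ACTIONS = {"approve", "review", "reject"}


@dataclass(frozen=True)
class Proposal:
    action: str        # what the model suggests
    confidence: float  # model-reported confidence, expected in [0, 1]


def guarded_decision(
    model: Callable[[str], Proposal],
    request: str,
    min_confidence: float = 0.8,   # assumed threshold, for illustration only
    fallback: str = "review",
) -> str:
    """Return the model's proposed action only if it passes fixed checks."""
    proposal = model(request)
    # Deterministic checks: the same proposal always yields the same outcome.
    if proposal.action not in ALLOWED_ACTIONS:
        return fallback
    if not 0.0 <= proposal.confidence <= 1.0:
        return fallback
    if proposal.confidence < min_confidence:
        return fallback
    return proposal.action


def stub_model(request: str) -> Proposal:
    """Stand-in for a real model call; any callable with this shape works."""
    return Proposal(action="approve", confidence=0.65)


print(guarded_decision(stub_model, "refund request"))
```

Because `guarded_decision` depends only on the callable's signature, replacing the model does not change the control logic. Even this small guard hints at the surrounding work a production platform must also handle, such as auditing, versioning, and drift monitoring.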

Most enterprises are not structured to excel at building, evolving, and supporting this type of platform. It requires sustained investment, highly specialized engineering, and deep operational experience.

The platform layer should be adopted, not built.

The enterprise should shift its effort to the competitive logic layer above it.

4. The Work Enterprises Should Own: The Competitive Logic Layer

Competitive advantage does not come from the platform.

It comes from how the business uses the platform.

This logic layer contains the elements that no vendor can package:

– the way the enterprise makes decisions

– the unique structure of workflows

– the sequencing of operational tasks

– the integration of domain expertise

– the behavior of frontline teams

– the use of proprietary data

– the organization’s risk posture

– the nuances of processes and exceptions

This layer is where enterprises should apply their best talent.

This is the layer where DIY drives value.

It does not always require building an application.

It may involve configuring an existing module, extending a workflow, defining rules, building an agent, or shaping how intelligence flows through an operation.

What matters is that this logic expresses the unique way the business competes.
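
As an illustration of "defining rules" in the logic layer, the following Python sketch encodes a risk posture and one business exception on top of a score that an adopted platform is assumed to supply. All names and thresholds (`Order`, `RiskPosture`, `route_order`, `platform_risk_score`, the 10,000 limit) are hypothetical and stand in for whatever a given enterprise would actually use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    amount: float
    customer_tier: str          # e.g. "standard" or "strategic" (assumed tiers)
    platform_risk_score: float  # assumed to come from the adopted platform, in [0, 1]


@dataclass(frozen=True)
class RiskPosture:
    # Illustrative thresholds; these are the enterprise's own policy choices.
    max_auto_amount: float = 10_000.0
    max_risk_score: float = 0.3


def route_order(order: Order, posture: RiskPosture = RiskPosture()) -> str:
    """Decide how an order is handled, using enterprise-specific rules."""
    # Business exception: strategic customers always get a human touchpoint.
    if order.customer_tier == "strategic":
        return "account_manager_review"
    # Risk posture: the platform scores, the enterprise sets the tolerance.
    if order.platform_risk_score > posture.max_risk_score:
        return "risk_review"
    if order.amount > posture.max_auto_amount:
        return "manager_approval"
    return "auto_approve"


print(route_order(Order(amount=500.0, customer_tier="standard", platform_risk_score=0.1)))
```

The platform supplies the score; the thresholds, the exception for strategic customers, and the order in which the checks run are the parts that express how this particular business chooses to operate.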

5. The Operating Model Shift: How the CLEARED AI Framework™ Enables Differentiation

The CLEARED AI Framework™ provides the design approach for creating the competitive logic layer without reconstructing the platform itself. It helps organizations:

– Clarify business outcomes

– Leverage the right data sources

– Engineer reusable building blocks

– Architect AI enabled workflows

– Reduce delay between insight and action

– Enable business teams with the tools they need

– Deliver consistent, measurable enterprise value

The framework is designed for extensibility across pre-existing technology and modern AI stacks. It guides enterprises in directing DIY effort toward the last mile, not the foundational layers.

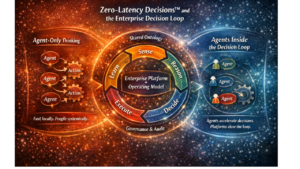

6. The AI Enabled Enterprise Index™: Zero Latency as the Highest Maturity State

The AI Enabled Enterprise Index™ describes the progression from experimentation to full scale transformation:

Level 1 – Experiments

Level 2 – Fragmented production

Level 3 – Strategic portfolio

Level 4 – Factory enabled delivery

Level 5 – Zero Latency Enterprise™

Zero-Latency Enterprise™ is the nirvana of competitive advantage. It represents an organization where signals are translated into decisions and actions with minimal delay. It is a state where AI is embedded into operating rhythms, workflows adjust in near real time, and iterative learning occurs continuously across the enterprise.

Reaching this state requires two things:

1. an adopted, AI native platform capable of real time, secure, consistent operation

2. an enterprise that has invested in the competitive logic layer built on top of it

7. Conclusion: Adopt the Platform. Build the Competitive Logic. Achieve Zero-Latency Decisions™.

Enterprises that attempt to build their own AI stack repeat the same mistakes that defined prior eras of custom ERP, custom data warehouses, and internal cloud platforms. The economics and complexity make these internal builds unsustainable.

The path to competitive advantage is not in rebuilding the underlying technology. It is in using it well.

The organization should adopt the AI platform layer, leverage all suitable components, and focus its talent on the competitive logic layer that expresses how the business operates. This logic layer, combined with a modern operating model, is what accelerates the enterprise toward Zero-Latency performance.
