Author’s Perspective

Honorio J. Padrón III

Global Transformation Leader & Enterprise AI Strategist. Former CEO/CIO/CTO, Fortune 100 Advisor, NASA Program Contributor, VP LATAM for C3 AI, Creator of the CLEARED AI™ Framework, AI-ENABLED Enterprise Index™, and the Zero-Latency Decision Enterprise™ Model

For more than five decades, I have led enterprise-wide transformations across industries and continents, re-architecting operating models, designing decision-intelligence systems, integrating advanced automation, and advising organizations from the Fortune 100 to NASA. My work has consistently centered on a single objective: enabling large-scale enterprises to make faster, smarter, structurally aligned competitive decisions as effectively as possible.

What informs this paper is not theory, but the accumulated pattern recognition of 50 years spent inside the world’s most complex operational environments: global supply chains, regulated financial systems, multi-plant manufacturing networks, mission-critical government programs, and industrial operations spanning dozens of countries. From these transformations, one truth has become unmistakable: enterprise-scale intelligence only emerges when architecture matures, not when tools proliferate.

One moment early in my career crystallized this principle. During a major digital transformation I was leading, a country manager confronted me and accused me of taking away his “flexibility.” My response was simple, and it has guided my approach ever since. I told him:

“You and your team are great musicians. Until now, you could stand in any corner, pull out a trumpet, and play it beautifully or tomorrow pick up a guitar instead. But today, you aren’t soloists. You are part of a symphony. You must play from the same sheet of music. And while that may feel restrictive, imagine the sound a full orchestra can produce compared to one person playing a trumpet in the corner.”

He paused, understood the enterprise perspective and the magnitude of the shift, and walked away without another word. That moment captured the essence of enterprise transformation: alignment is not constraint; it is power.

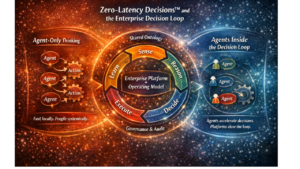

This understanding is essential to interpreting the structural shift now unfolding with AI. That is why this analysis focuses squarely on the world’s largest corporations. The Global 2000 represent roughly half to two-thirds of global enterprise AI spending. They carry the data gravity, compliance burdens, system interdependencies, and operational complexity that leave no room for fragmented, improvisational solutions. For these organizations, the move toward integrated AI factories is not optional; it is a structural inevitability dictated by economics, governance, security, and the accelerating need for Zero-Latency Decisions™.

This does not mean smaller AI product and services organizations have no future. Mid-market companies and focused operators will continue to benefit from targeted AI solutions and domain-specific automation. A new tier of AI-Factories-as-a-Service will emerge for them. Niche AI providers will remain viable in isolated operational islands where integration demands are minimal.

But the purpose of this paper is to describe the architectural reality facing the largest enterprises on earth. In the G2000, fragmentation is not simply inefficient; it is incompatible with how these organizations operate. Their path forward is defined: platform-level consolidation, governed intelligence, real-time orchestration, and the emergence of the AI-ENABLED™ enterprise as the new operating model of the global economy.

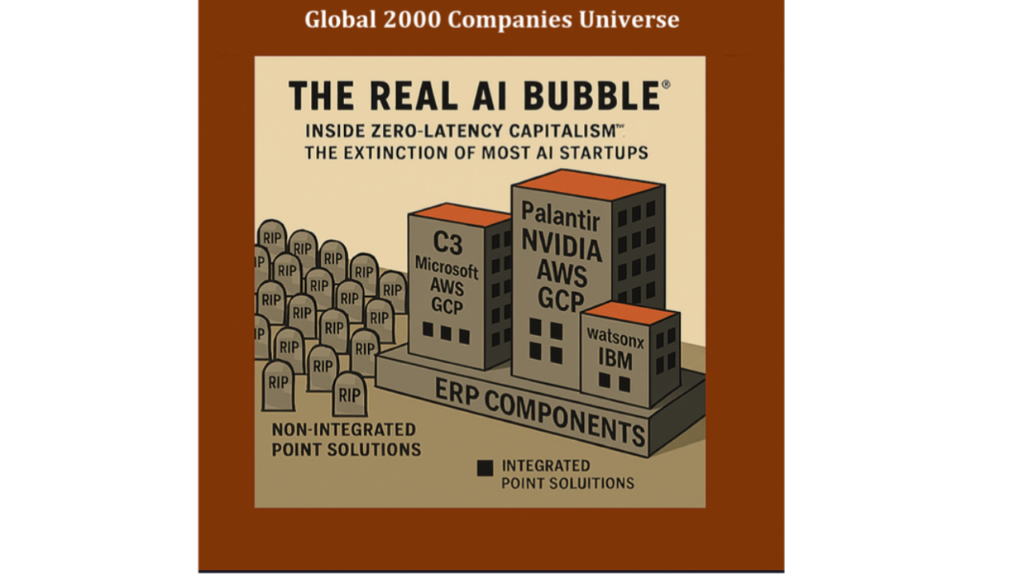

Introduction – The Bubble No One Sees

Talk of an “AI bubble” fills financial media. Valuations, IPOs, and venture funding dominate the conversation. But the true bubble isn’t financial; it’s structural. It consists of the thousands of narrow AI companies still being created during the initial experimental stage of enterprise AI adoption. According to Gartner’s 2024 Hype Cycle, generative AI has already entered the “Trough of Disillusionment,” a phase where high expectations collide with hard reality. Recent research reinforces this: a report from the Massachusetts Institute of Technology (MIT) found that 95% of enterprise generative-AI pilots fail to deliver measurable revenue impact. Too many organizations are still deploying point solutions that consume vast processing power and budget but deliver little scaled enterprise value.

As organizations climb the AI-ENABLED™ Maturity Continuum, they are discovering that fragmented tools cannot deliver governed, scalable, or adaptive intelligence. The correction ahead will not come from market panic; it will emerge from architectural maturity, as enterprises evolve their operating model to become truly AI-ENABLED™.

This phase mirrors what I described in my recent article on the return of the Solow Paradox, the phenomenon observed in the 1980s and 1990s when massive IT and ERP investments failed to yield corresponding productivity gains. The pattern is repeating: today’s enterprises are investing heavily in AI, yet seeing limited systemic benefit because most solutions remain disjointed and localized, not structurally integrated into the enterprise fabric.

As with ERP’s eventual consolidation, the inflection point will come when AI transitions from isolated experimentation to an end-to-end, platform-based operational layer. Only then will enterprises unlock measurable productivity and decision velocity.

From AI Experiments to Enterprise Architecture

Every technology wave starts with scattered experimentation. In AI, that meant every department buying its own tools: marketing used text generators, HR used screening bots, operations used forecasting models, and contract and regulatory teams ran ChatGPT experiments. The result was innovation without integration. History shows what happens next: departmental tools gave way to ERP systems that unified transactions, and the early Internet’s fragmentation consolidated into platforms like Azure, AWS, and Google Cloud. AI is now entering the same consolidation phase: from fragmented tools to enterprise-wide platforms.

The Platform Era: Building the AI Factory

The next decade belongs to AI-native platforms: integrated environments that connect data, models, agents, LLMs, orchestrators, and workflows across the enterprise. They act as AI factories, producing continuous learning and governed intelligence.

NVIDIA + Palantir: The AI Factory Alliance

In October 2025, NVIDIA and Palantir announced a major partnership to operationalize AI by turning enterprise data into dynamic decision intelligence. Palantir’s AIP integrates NVIDIA accelerated computing, GPU-optimized model libraries, and real-time simulation capabilities, enabling enterprises to build and operate AI factories at scale.

Jensen Huang, NVIDIA CEO: “The next industrial revolution has begun. Companies and countries are partnering with NVIDIA to build AI factories that produce a new commodity, artificial intelligence.”

Alex Karp, Palantir CEO: “We are proud to partner with NVIDIA to fuse our decision intelligence systems with the world’s most advanced AI infrastructure.”

Use Case Example

One of the most visible examples is Lowe’s Companies Inc. Lowe’s used the combined NVIDIA and Palantir AI Factory architecture to build a full digital twin of its global supply chain. The model integrates thousands of vendors, hundreds of distribution centers, and more than a thousand stores. This enables real-time reoptimization of inventory flows, shipment routing, labor allocation, and disruption response. This level of operational intelligence is only possible when accelerated computing and enterprise-wide data integration are fused into one AI factory layer.

C3 AI and Microsoft: The Enterprise Stack

C3 AI’s enterprise platform integrates data, the full model and agent lifecycle, workflow-embedded LLM functionality, security, monitoring, orchestration, and governance. Through its strategic alliance with Microsoft, the C3 AI platform is natively available within Azure and the Azure AI services portfolio. This creates a unified environment for building enterprise-scale AI applications with full operational control.

Thomas M. Siebel, C3 AI CEO: “C3 AI has pioneered Enterprise AI for over a decade, accelerated by collaboration with Microsoft to help iconic organizations solve the hardest business challenges of the twenty-first century.”

Judson Althoff, Microsoft EVP: “Together, C3 AI and Microsoft are enabling enterprises to use AI to transform their businesses and their sustainability goals.”

Use Case Examples

Shell uses C3 AI and Microsoft Azure to operate one of the world’s largest predictive maintenance deployments. The system monitors tens of thousands of pieces of rotating equipment across global upstream and downstream operations. By unifying data into a single industrial AI environment, the solution reduces failures, lowers maintenance cost, improves production uptime, and accelerates learning cycles across assets.

At Dow, the C3 AI and Azure architecture supports supply chain optimization and digital twin modeling across multiple production lines. The system identifies performance drift, predicts material constraints, reduces production losses, and continuously optimizes throughput across complex manufacturing networks.

I was at C3 when this partnership was activated and personally witnessed how rapidly these systems scaled once the platform was integrated into Azure. The completeness, repeatability, and operational impact of these deployments are precise proof of the AI Factory model in practice.

IBM watsonx: The Hybrid-Cloud Intelligence Layer

IBM’s watsonx platform now spans hybrid- and multi-cloud environments, tightly integrating data fabrics, model development and deployment, and enterprise governance.

“What differentiates IBM is the breadth of our AI offerings, with an innovative technology stack and consulting business at scale and our ‘client-zero’ lens.” Arvind Krishna, IBM CEO.

watsonx is beginning to deliver in verticals where depth and scale matter most:

In healthcare and life sciences, IBM notes that watsonx helps organizations “foster innovation and boost productivity across priority workflows,” combining trusted data, regulated environments and mission-critical systems.

Use Case Examples

A recent IBM case at KPJ Healthcare Berhad (Malaysia) uses watsonx for a 24/7 AI-powered patient-service chatbot covering 30 hospitals, showing how IBM is embedding intelligence into clinical operations and service design.

On the data-and-governance front, IBM’s new watsonx.data and watsonx.governance modules enable customers to unify siloed enterprise data, run scalable workloads, and meet major regulatory frameworks; 40% better accuracy in unstructured-data trials has been cited.

This is more than a technology refresh. It signals IBM’s shift from “AI experimentation” to industrial-grade intelligence, embedded, governed, outcome-focused and integrated into core enterprise systems.

The ERP/CRM Foundation: The Transaction Core

Consider SAP, Oracle, and Salesforce, the dominant ERP/CRM providers. One of these vendors, operating in more than 180 countries with over 440,000 customers, reports that its client base represents a majority of global commerce. This ERP/CRM layer, the transaction core, is mature, standardized, deeply embedded, and architecturally stable. It is the authoritative system of record for financials, operations, supply chain, HR, procurement, and manufacturing execution across the global economy.

As AI matures, ERP/CRM systems will increasingly absorb localized use cases, especially those where the required data already lives inside the ERP/CRM database or data lake. When the data, workflow context, and governance rules are native to SAP, Oracle, or Salesforce, many operational AI use cases can simply be executed inside the ERP/CRM layer itself. This includes forecasting, anomaly detection, compliance monitoring, workflow recommendations, and rule-driven automation.

However, ERP/CRM systems are not designed to orchestrate enterprise-wide intelligence, cross-functional optimization, or real-time decisioning across heterogeneous data sources. For those use cases, enterprises will move upstream into AI-native platforms such as C3 AI, Palantir, or watsonx, where models, agents, digital twins, and simulation operate across all systems.

The strategic design question becomes: Which use cases remain in the ERP where the data originates, and which must escalate to the AI Factory where cross-enterprise intelligence is created?

This is the architectural future of the AI-ENABLED™ Enterprise:

- Localized AI inside ERP when data gravity and workflow context are self-contained

- Enterprise AI inside the AI Factory when decisions must integrate data, models and signals from across the entire organization

Any AI system that cannot integrate into this two-layer structure will remain peripheral.
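The two-layer rule above can be sketched as a small routing function. This is only an illustration under stated assumptions: the `UseCase` fields, the `route` function, and the system names are hypothetical and do not correspond to any vendor API. A use case stays in the ERP layer only when every data source it needs is ERP-native and it does not require cross-functional, real-time decisioning.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    ERP = "localized AI inside ERP/CRM"
    AI_FACTORY = "enterprise AI inside the AI Factory"


@dataclass(frozen=True)
class UseCase:
    # Hypothetical descriptor; field names are illustrative assumptions.
    name: str
    data_sources: frozenset[str]          # systems the use case must read from
    needs_cross_functional_decisions: bool = False


def route(use_case: UseCase, erp_systems: frozenset[str]) -> Layer:
    """Keep a use case in ERP only when its data gravity is self-contained."""
    self_contained = use_case.data_sources <= erp_systems  # subset test
    if self_contained and not use_case.needs_cross_functional_decisions:
        return Layer.ERP
    return Layer.AI_FACTORY


# Illustrative system names, not real product identifiers.
erp = frozenset({"erp_financials", "erp_procurement"})

invoice_matching = UseCase(
    "invoice matching",
    frozenset({"erp_financials", "erp_procurement"}),
)
multi_plant = UseCase(
    "multi-plant throughput optimization",
    frozenset({"erp_procurement", "plant_historian", "logistics_feed"}),
    needs_cross_functional_decisions=True,
)

print(route(invoice_matching, erp).value)
print(route(multi_plant, erp).value)
```

In this sketch the subset test stands in for "data gravity and workflow context are self-contained"; a real assessment would also weigh governance and latency requirements.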

Robotics + Automation + OT + Digital Twins

The future of automation will be governed by Robotics Operations Architecture (ROA), the structured strategy for how robots, PLCs, DCS systems, sensors, OT infrastructure, and cyber-physical systems connect to enterprise intelligence through AI-native platforms. ROA is the execution layer of the AI-ENABLED™ Enterprise, where digital and physical operations converge.

ROA Unifies Three Historically Separate Domains

- Industrial Robotics
  - Fixed automation (ABB, Fanuc, KUKA)
  - Collaborative robots (UR, Yaskawa)
  - Autonomous mobile robots (AMRs)
  - Robotic process cells

- Industrial Automation: PLCs, DCS, SCADA, MES
  - Siemens, Rockwell, Schneider
  - Distributed control systems and logic sequencing
  - SCADA supervisory control and process monitoring
  - MES systems orchestrating production workflows

- Operational Technology (OT) Data Infrastructure
  - OPC UA brokers
  - Time-series historians (OSIsoft/AVEVA PI, Canary)
  - Sensor networks and edge gateways
  - Industrial communication protocols

Historically, these systems operated in siloed control stacks, interacting only through rigid logic or manually engineered integrations. ROA changes that entirely.

The Role of ROA in the AI-ENABLED™ Enterprise

1. Robots, PLCs, and OT systems become signal contributors

Every action, vibration, temperature reading, cycle count, and state change becomes real-time structured data feeding the AI Factory.

2. Digital Twins unify the entire operational context

The Digital Twin, the real-time, simulation-ready model of the enterprise, should not live in:

- OEM control systems,

- proprietary robotic cells, or

- isolated PLC logic.

It must reside in the core of the AI Factory, where data from production, supply chain, quality, maintenance, and logistics are integrated into a single cognitive model.

3. AI Factories orchestrate actions, not just insights

This is the shift from “analytics” to closed-loop autonomous operations:

- Palantir AIP combines real-time OT data, simulation, and adaptive decision intelligence to orchestrate robotic and machine actions across factories.

- C3 AI integrates industrial time series, asset models, and production workflows into digital twin applications that generate recommendations or act directly via OPC, MQTT, or REST interfaces.

ROA is what enables the AI Factory to move beyond prediction into execution.
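The move from prediction to execution described above can be sketched as a closed loop: signals update a twin, a policy proposes an action, the twin checks the action before it is dispatched. This is a toy sketch under stated assumptions, not how Palantir AIP or C3 AI implement it: every class, function, metric name, and threshold below is hypothetical, and `dispatch` is a stand-in for whatever OPC, MQTT, or REST interface a real deployment would use.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List, Optional, Tuple

VIBRATION_LIMIT = 7.0  # illustrative threshold only, not an engineering value


@dataclass(frozen=True)
class Signal:
    asset_id: str
    metric: str   # e.g. "vibration_mm_s" or "backlog_units" (made-up names)
    value: float


@dataclass(frozen=True)
class Action:
    asset_id: str
    command: str


@dataclass
class DigitalTwin:
    """Hypothetical in-memory twin: latest value of each metric per asset."""
    state: Dict[Tuple[str, str], float] = field(default_factory=dict)

    def ingest(self, signal: Signal) -> None:
        self.state[(signal.asset_id, signal.metric)] = signal.value

    def simulate(self, action: Action) -> bool:
        """Toy pre-execution check: never speed up an asset that vibrates hard."""
        vibration = self.state.get((action.asset_id, "vibration_mm_s"), 0.0)
        unsafe = action.command == "increase_speed" and vibration > VIBRATION_LIMIT
        return not unsafe


def propose(twin: DigitalTwin, signal: Signal) -> Optional[Action]:
    """Toy policy standing in for the platform's decision models."""
    if signal.metric == "vibration_mm_s" and signal.value > VIBRATION_LIMIT:
        return Action(signal.asset_id, "schedule_inspection")
    if signal.metric == "backlog_units" and signal.value > 100:
        return Action(signal.asset_id, "increase_speed")
    return None


def closed_loop(
    twin: DigitalTwin,
    signals: Iterable[Signal],
    dispatch: Callable[[Action], None],
) -> List[Action]:
    """Ingest each signal, propose an action, and dispatch it only if the twin approves."""
    executed: List[Action] = []
    for signal in signals:
        twin.ingest(signal)
        action = propose(twin, signal)
        if action is not None and twin.simulate(action):
            dispatch(action)
            executed.append(action)
    return executed


sent: List[Action] = []  # stand-in for a real control interface
executed = closed_loop(
    DigitalTwin(),
    [
        Signal("line-1", "vibration_mm_s", 9.2),
        Signal("line-1", "backlog_units", 250.0),
        Signal("line-2", "backlog_units", 250.0),
    ],
    dispatch=sent.append,
)
for action in executed:
    print(action.asset_id, action.command)
```

Here the speed-up proposed for `line-1` is blocked because the twin already holds a high vibration reading for that asset, while the same proposal for `line-2` goes through. The point of the sketch is the ordering: the twin is consulted before execution, not after.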

Why ROA Must Sit Inside AI-Native Platforms (Not OEM Stacks)

1. Robots and PLCs cannot see the whole enterprise

OEM control systems only see local signals. They do not see:

- supply chain variability

- maintenance risk from other assets

- forecasted demand

- energy price swings

- staffing constraints

- logistics bottlenecks

Only the AI Factory has this view.

2. OEM ecosystems are proprietary and closed

Robotics and PLC vendor ecosystems are not designed to host cross-functional intelligence. They are designed for deterministic control, not enterprise-wide optimization.

3. AI-enabled autonomy requires data and model orchestration across systems

Autonomous factories are not achieved by “smart robots.” They are achieved by smart enterprises:

- multi-asset learning

- multi-plant optimization

- cross-line scheduling

- enterprise maintenance strategies

- energy + throughput balancing

This requires the AI Factory as the global orchestrator.

C3 AI and Palantir AIP Already Operate as ROA Orchestrators

C3 AI

- Ingests OT data from historians (PI, Wonderware, Ignition)

- Connects to PLC/SCADA layers

- Embeds industrial digital twins

- Executes predictive maintenance, process optimization, and asset-level autonomy

Palantir AIP

- Connects OT data through Foundry Ontology

- Builds real-time digital twins and simulations

- Supports autonomous decision workflows

- Controls robotic and automation systems through secure API and edge actions

These platforms represent the first real examples of enterprise-level ROA orchestration: robots + automation + OT + digital twin + enterprise intelligence in one architectural loop.

The Future of ROA: Autonomous Industrial Operations

ROA is the blueprint for an enterprise where:

- robots reconfigure themselves based on forecasted demand

- PLC logic adjusts based on supply chain constraints

- production lines rebalance in real time

- maintenance actions are automatically sequenced

- energy optimization and throughput coexist dynamically

- digital twins simulate and correct actions before execution

This is the destination of the AI-ENABLED™ Enterprise: a single cognitive system directing both digital and physical operations as a Zero-Latency Decision Enterprise™.

Case Example on the Challenge of a Best-of-Breed, Narrowly Scoped Point Solution – Augury in Context

Before implementing C3 AI at Pantaleon Sugar Holdings, we evaluated Augury’s capabilities and quickly decided against them due to the singular and isolated nature of their solution set. Even at that time, we had already conceptualized the need for an integrated enterprise platform capable of supporting the broad portfolio of use cases we had identified. A standalone vibration-analysis tool, no matter how sophisticated, could not support the architectural direction we were designing.

Our decision was to move forward with C3 AI as the platform, including their integrated Predictive Maintenance application. Yes, we sacrificed some of Augury’s world-class sensor-driven features, but we did so in favor of a unified, enterprise-grade architecture, one where predictive maintenance is not a specialty tool, but a native module inside a broader, scalable, AI-enabled infrastructure.

Augury’s sensor-based machine-health platform has clear value within the narrow domain of predictive maintenance, but as enterprises adopt C3 AI, Palantir AIP, and IBM watsonx, predictive-maintenance intelligence becomes just one module inside a far broader operational architecture. In these ecosystems, machine health is not an app; it is one of many signals feeding a unified digital twin and the ROA (Robotics Operations Architecture) execution layer.

To remain viable long-term, Augury must integrate its insights into enterprise-wide digital twins, OT data fabrics, and ROA orchestration environments. If it embeds within those ecosystems, it can become a valuable signal provider inside the AI-ENABLED™ Enterprise. But if it remains standalone, it will eventually be replaced by an integrated, platform-native option, perhaps not as sophisticated in isolation, but vastly more valuable because integrated intelligence almost always outcompetes best-of-breed point functionality.

Horizontal Utility Orchestration and ROA: How Hyperscalers and Automation Fit Into the AI-ENABLED™ Enterprise

While enterprise AI Factories such as Palantir AIP, C3 AI, and IBM watsonx govern the cognitive fabric of the large intelligent enterprise, a different layer of orchestration has emerged beneath them: horizontal utility integration, driven primarily by hyperscalers (AWS, Microsoft Azure, Google Cloud).

This horizontal layer does not replace enterprise-level intelligence; rather, it provides the cloud-level execution fabric for agents, workflows, service calls, and data movement across distributed environments.

1. The Role of Horizontal Utility Orchestration (Hyperscalers)

Hyperscalers operate the “plumbing” layer of the enterprise AI stack. Their orchestration tools are designed to coordinate:

- cloud services

- LLM calls

- micro-automations

- service chaining

- event-driven workflows

- cross-service routing

- API-level integration

- data flow between cloud-native components

This orchestration is essential for operational scalability, but it does not provide the enterprise-wide decision governance or cross-functional optimization required for AI-ENABLED™ operations.

2. AWS as the Case Study: Utility-Level Agent Orchestration

AWS’s newly announced “utility-style agent orchestrator” is the clearest example.

AWS is enabling:

- call sequencing between Bedrock foundation models

- routing across Lambda, S3, DynamoDB, Kinesis, and SageMaker

- agent memory passing

- multi-step agent pipelines

- distributed agent execution

- integration with internal APIs and cloud-native microservices

What AWS is providing: Infrastructure-level orchestration for AI agents and workflows.

What AWS is not providing:

- domain ontologies

- enterprise-wide optimization

- digital twin management

- cross-system decision governance

- constraint reasoning across supply chain, manufacturing, finance

- multi-model, multi-agent decision engines

- deep integration with ERP, OT, MES, M&C systems

In short:

AWS orchestrates tasks. AI Factories orchestrate decisions.

And they operate at fundamentally different altitudes.

3. How Azure and Google Fit Into the Pattern

The same trend applies across hyperscalers:

Microsoft Azure

- Azure AI Studio orchestration

- Logic Apps for workflows

- Azure ML pipelines and agentic chaining

- Fabric for distributed data orchestration

Google Cloud

- Vertex AI agent orchestration

- Duet AI routing

- Cloud Run and Workflows

- Global service mesh integration

All three hyperscalers are moving aggressively into the same horizontal category:

“Agent and workflow utilities for cloud-native execution.”

But none of them operate as enterprise decision-intelligence platforms. That is the domain of AI Factories.

4. ROA: The Integration Layer Connecting Physical Automation to AI Factories

ROA (Robotics Operations Architecture) is the operational layer that unifies:

- robotics

- machinery control systems

- PLCs

- industrial automation

- IIoT sensor arrays

- SCADA systems

- historians

- MES systems

- OT cybersecurity layers

This is where the physical enterprise connects to the digital brain.

ROA ensures that:

- robots

- conveyors

- production lines

- automated warehouses

- distribution hubs

- industrial assets

- autonomous vehicles

…operate in alignment with enterprise-wide intelligence coming from the AI Factory.

Why ROA matters:

- It brings the physical world into the decision loop

- It allows AI Factories to optimize real-time execution

- It transforms digital twins into live operational systems

- It dissolves the boundary between IT and OT

- It creates the environment necessary for Zero-Latency Decisions™

5. How Hyperscalers, AI Factories, and ROA Fit Together

A simplified but accurate architecture:

ERP: Transaction Core (SAP, Oracle, Salesforce)

- Owns data gravity and financial governance

Horizontal Utility Orchestration (AWS, Azure, Google)

- Manages cloud services, agent workflows, and distributed pipelines

- Provides infrastructure-level automation (“the plumbing”)

Enterprise AI Factories (Palantir AIP, C3 AI, IBM watsonx)

- Governs enterprise-wide decision intelligence

- Manages ontologies, digital twins, optimization, constraints

- Hosts enterprise agents, models, and simulations

ROA / Digital Twin Execution Layer

- Physical-automation, OT, robotics, historian data, PLCs

- Routes real-time signals into the AI Factory

- Executes decisions in the physical world

LLMs / Human Loop Collaboration Layer

- Interfaces for knowledge work, governance, compliance, documentation

Investor Lens: Where Strategic Power Resides – The AI-ENABLED™ Enterprise Index

In this environment, “API connectivity” is meaningless. An ever-growing number of API-connected point solutions quickly becomes financially and operationally unsustainable. The real signal for investors is no longer whether a company has AI, but whether it has a structural role inside an AI-ENABLED™ Enterprise, as measured by the AI-ENABLED™ Enterprise Index grounded in the CLEARED AI™ pillars.

The AI-ENABLED™ Enterprise Index does not rate features or demos; it evaluates where and how a company participates in the enterprise operating model defined by CLEARED AI™. It asks:

- Does this company sit in the critical pathways of data, decisions, and execution?

- Or is it a peripheral tool that will be absorbed into the ERP layer (SAP, Oracle, Salesforce) or into the AI Factory as consolidation progresses?

Within that CLEARED AI™ context, investors should interpret the following criteria as the core structural tests:

- Architectural Leverage – Value migrates to the layers closest to data gravity (ERP, M&C systems) and decision orchestration (AI Factory). If a company operates far from these gravitational centers, its leverage, and therefore its long-term value, trends toward zero.

- Proprietary Flywheel – Companies with true learning loops and feedback mechanisms that improve models and outcomes over time build durable moats. Those without a flywheel are simply providing one more model that the platforms can replicate and out-scale.

- Platform Synergy – Enhancers of major platforms survive; dependents and duplicates do not. A company that clearly extends the capabilities or economics of ecosystems such as Palantir/NVIDIA, C3 AI/Microsoft, or IBM watsonx has a path to persistence. One that competes with native platform functions does not.

- Domain and Data Control – Owning, generating, or governing unique operational data is a decisive advantage. Without domain-specific data gravity, an AI solution is interchangeable and will eventually be displaced by platform-native offerings that see more data, more often.

- Economic Control Points – Margins concentrate in the control layers of the architecture: ERP, AI Factories, M&C systems, and ROA/Digital Twin layers. Companies anchored in these control points can sustain premium economics; those operating in the “thin middleware” of point functionality will experience margin compression and eventual consolidation or extinction.

In other words, the AI-ENABLED™ Enterprise Index, built on CLEARED AI™, gives investors a structural lens: Does this company sit in a place where value accumulates, or in a place where value will be arbitraged away as the architecture solidifies?

A second, sharper question now confronts investors:

Should I invest in a startup or company that is not clearly in the AI Factory spectrum?

Here, the CLEARED AI™-based AI-ENABLED™ Enterprise Index is just as critical. The burden of proof is much higher for companies outside the dominant AI platforms.

If a company is not clearly aligned with, or integrated into, one of the emerging AI Factory ecosystems (Palantir/NVIDIA, C3 AI/Microsoft, IBM watsonx), investors should only proceed when all of the following are true:

- The company scores high on Architectural Leverage, meaning it anchors itself in critical enterprise workflows, not at the edges.

- It demonstrates a genuine Proprietary Flywheel, where each deployment, user, or transaction improves the intelligence of the system in ways the platforms cannot trivially replicate.

- Its Platform Synergy story is credible: it is obviously a complement, not a competitor, to the AI Factories and ERP providers that will own the core.

- It has clear Domain and Data Control, holding assets that would be strategically painful for an AI Factory or ERP platform not to access.

- It touches one or more Economic Control Points: not just “nice to have” analytics, but levers that move cost, risk, throughput, or margin in a measurable way.
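The all-of-the-following test above can be sketched as a simple gate. The paper does not publish a scoring scale for the AI-ENABLED™ Enterprise Index, so the 0-to-1 scores, the 0.7 threshold, and all names below are hypothetical assumptions used only to show the logic: a company outside the AI Factory ecosystems passes only when none of the five criteria falls short.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class StructuralScores:
    # Hypothetical 0.0-1.0 scores, one per structural test named in the text.
    architectural_leverage: float
    proprietary_flywheel: float
    platform_synergy: float
    domain_and_data_control: float
    economic_control_points: float


def failing_criteria(scores: StructuralScores, threshold: float = 0.7) -> List[str]:
    """Return the names of every criterion scoring below the threshold."""
    return [f.name for f in fields(scores) if getattr(scores, f.name) < threshold]


def should_proceed(scores: StructuralScores, threshold: float = 0.7) -> bool:
    """All five tests must pass; one weak criterion is enough to stop."""
    return not failing_criteria(scores, threshold)


# Illustrative candidate, not a real company.
candidate = StructuralScores(
    architectural_leverage=0.9,
    proprietary_flywheel=0.8,
    platform_synergy=0.75,
    domain_and_data_control=0.85,
    economic_control_points=0.6,
)

print(should_proceed(candidate))
print(failing_criteria(candidate))
```

The gate is deliberately conjunctive rather than a weighted average: a strong score on four criteria does not compensate for failing the fifth, which matches the higher burden of proof described for companies outside the dominant platforms.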

There will always be a narrow class of specialized, island use cases that can remain standalone: small pockets where the data, workflows, and impact are so localized that they never need to interact with the rest of the enterprise. But these are the exception, not the rule.

For everything else, the investor question is binary and unforgiving:

Is this company architecturally positioned to become part of an AI-ENABLED™ Enterprise, or is it just another point solution waiting to be consolidated away?

That is the lens the CLEARED AI™ framework and the AI-ENABLED™ Enterprise Index are designed to provide.

The Shrinking White Space: Why Point Solutions Cannot Survive – The Structural Proof

1. AI Factories absorb the most valuable use cases

AI-native platforms (Palantir, NVIDIA, C3 AI, Microsoft, IBM) already include:

- Predictive maintenance

- Supply chain optimization

- Fraud detection

- Customer intelligence

- Demand forecasting

- Agentic workflow automation

- Asset-level digital twins

- Large-scale operational decision engines

Any company offering a narrow version of these capabilities is competing with the platform itself, which has:

- more data

- better integration

- faster compute

- enterprise security

- and lower marginal cost

This is not a “vendor battle”; it is platform gravity.

Example: Palantir’s AIP already includes production-grade forecasting, routing optimization, asset monitoring, automated reporting, cyber/OT intelligence, and planning. A point solution that offers “AI forecasting” or “maintenance optimization” is instantly redundant when the platform does it natively.

2. ERP systems will reclaim simple, localized AI

Use cases where the data already lives inside SAP, Oracle, or Salesforce will be absorbed into the ERP modules themselves:

- invoice matching

- supply planning

- HR screening

- reorder-point prediction

- production schedule suggestions

SAP, Oracle, and Salesforce are already embedding AI copilots and micro-models in their products; in SAP’s case, in modules like IBP, Ariba, SuccessFactors, EWM, and S/4HANA Manufacturing.

Example: SAP Joule AI Copilot is already integrating anomaly detection, demand prediction, supplier risk scoring, and workflow automation inside standard SAP transactions.

Anything local to ERP will be rendered obsolete as a standalone vendor offering.

3. M&C + ROA + Digital Twins eliminate “single-signal” companies

Companies that built models around:

- vibration analysis

- temperature monitoring

- line-speed optimization

- sensor-clamp anomaly detection

- routing heuristics

- vision-based quality inspection

are being displaced because AI Factories and Digital Twin infrastructures create multi-signal, multi-model intelligence.

Example: A company narrowly focused on preventive maintenance faces the unavoidable reality that:

- Palantir AIP includes multi-sensor maintenance models

- C3 AI includes full predictive maintenance and asset health suites

- Azure includes industrial MLOps and sensor-model pipelines

- AWS IoT TwinMaker provides a digital twin environment that absorbs individual analytics

Point solutions cannot compete with platforms that integrate every sensor, every plant, and every system.

4. The cost structure and management overhead make point solutions unsustainable

Running isolated AI workloads is expensive:

- separate ingestion

- separate normalization

- separate storage

- separate governance

- separate MLOps

- separate compute clusters

When enterprises unify everything into the AI Factory, these duplicative costs Point solutions simply cannot match the efficiency of consolidated architecture.

Example: Recent MIT research shows 95% of enterprise AI pilots do not scale, largely because of integration, governance, and compute cost barriers. Point solutions fail precisely where platforms succeed.

5. Enterprises do not want dozens of vendors — they want one operating model

AI at enterprise scale is not a tools problem; it is an operating model problem. Enterprises want:

- one ontology

- one digital twin

- one governance layer

- one AI/ML lifecycle

- one security boundary

- one decision engine

This is why investment is consolidating into:

- Palantir + NVIDIA

- C3 AI + Microsoft

- IBM watsonx

The architectures are becoming complete.

There are areas and Use Cases that will survive in isolation.

There will always remain a limited class of specialized, localized AI applications: functional islands whose data, workflow, and business impact are so self-contained that they neither require nor influence enterprise-wide orchestration. These include highly specialized scientific, imaging, geological, or device-localized systems that operate independently of the AI Factory.

Examples of Valid “Island” AI Use Cases

1. Specialized R&D Lab Instrumentation Models (Pharma & Materials Science)

A pharmaceutical discovery team may use an AI model that optimizes NMR signal interpretation, protein-folding visualization, or molecular docking heuristics.

- The data is lab-specific.

- The tool does not interact with ERP, supply chain, or operations.

- It does not require enterprise orchestration.

This can remain standalone without being absorbed by an AI Factory.

2. Geological Subsurface Modeling in Oil & Gas (Narrow Domain Experts Only)

An exploration group may rely on highly specialized seismic-processing algorithms that interpret microseismic events for a single basin or formation.

- These tools are not used outside the exploration group.

- They do not impact enterprise-wide processes.

- The underlying data is too domain-specific for broader reuse.

These remain functional islands.

3. Medical Imaging Algorithms inside Hospitals (Radiology-only Workflows)

AI that enhances MRI image resolution, detects microcalcifications, or automates segmentation for a single imaging device.

- This is “device-localized” intelligence.

- It does not drive enterprise decisions across departments.

- The data flow is strictly contained.

Such a use case does not require an AI Factory.

4. Retail Store Traffic Heat-Mapping for a Single Flagship Store

A flagship store might deploy a localized computer-vision heat map model for shelf redesign.

- It doesn’t need integration with supply chain, pricing, or CRM.

- It is a one-off environment.

- The insights are not scaled globally.

This is a true point-solution “island” with no architectural gravity.

5. Autonomous Drone Analytics for Specific Environmental Monitoring

A mining site may use drones with embedded AI to analyze tailings dam integrity or soil displacement.

- This is hyper-localized.

- It does not influence enterprise-wide processes.

- Data is spatially and contextually isolated.

It can exist outside the AI Factory without architectural conflict.

Why These Survive (Strategic Explanation)

These “island” solutions survive because they meet three criteria:

1. Low Coupling

They do not need ERP, CRM, supply chain, or finance integration.

2. No Impact on Cross-Enterprise Optimization

They do not influence or require Zero-Latency™ Decisions across multiple functions.

3. Specialized Talent + Specialized Data

Their domain is too narrow to justify platformization.
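For readers who prefer to see the three criteria as an explicit rule, here is a minimal sketch in Python. It is only an illustration of the test described above, not a formal scoring model: the `UseCase` class, its field names, and the `is_island` function are hypothetical names introduced for this example, and the true/false answers assigned to each sample use case are assumptions made for demonstration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of an AI use case (illustrative only)."""
    name: str
    # Criterion 1: does it need ERP, CRM, supply chain, or finance integration?
    needs_enterprise_integration: bool
    # Criterion 2: does it influence or require decisions across multiple functions?
    affects_cross_function_decisions: bool
    # Criterion 3: is the talent and data too specialized to justify platformization?
    narrow_specialized_domain: bool


def is_island(use_case: UseCase) -> bool:
    """Return True only when all three survival criteria are met."""
    return (
        not use_case.needs_enterprise_integration
        and not use_case.affects_cross_function_decisions
        and use_case.narrow_specialized_domain
    )


# Assumed answers for two use cases mentioned in this paper.
mri = UseCase("MRI segmentation for a single imaging device", False, False, True)
reorder = UseCase("Reorder-point prediction", True, True, False)

for uc in (mri, reorder):
    verdict = "island" if is_island(uc) else "absorbed by ERP or the AI Factory"
    print(f"{uc.name}: {verdict}")
```

The rule is deliberately strict: failing any one criterion is enough to pull a use case into the enterprise architecture.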

Investor Truth: There Is No White Space Left

Given this architecture, investors must ask:

Can this company logically exist anywhere in the intelligent enterprise stack?

If the answer is:

- not in ERP

- not in the AI Factory

- not in ROA

- not in the digital twin

- not as a data source with proprietary control

Then the company has no structural position and will not survive the consolidation phase.
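The investor question above can be written down as a simple checklist. The sketch below is a hedged illustration, not a valuation method: the `Position` enum, the `has_structural_position` function, and the two sample companies are hypothetical and exist only to show how the test reads when made explicit.

```python
from enum import Enum


class Position(Enum):
    """The five structural positions listed above (names are illustrative)."""
    ERP = "ERP"
    AI_FACTORY = "AI Factory"
    ROA = "ROA"
    DIGITAL_TWIN = "digital twin"
    PROPRIETARY_DATA_SOURCE = "data source with proprietary control"


def has_structural_position(positions):
    """A company passes the test if it occupies at least one position."""
    return len(set(positions)) > 0


# Hypothetical companies, used purely for demonstration.
sensor_data_owner = {Position.PROPRIETARY_DATA_SOURCE}
standalone_forecaster = set()

for label, held in (("sensor data owner", sensor_data_owner),
                    ("standalone forecaster", standalone_forecaster)):
    if has_structural_position(held):
        places = ", ".join(sorted(p.value for p in held))
        print(f"{label}: structural position in {places}")
    else:
        print(f"{label}: no structural position")
```

In practice the hard part is the judgment behind each answer, not the rule itself; the code only makes the logic of the question visible.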

Conclusion – The Age of the Intelligent Enterprise

In this environment, the white space for independent AI point solutions is disappearing rapidly. Unless a company is already integrated into an AI Factory or clearly moving toward that architectural position, it faces an accelerating risk of obsolescence. The strategic question investors must now ask is clear: Can the company evolve into or be absorbed by an AI Factory inside one of the dominant enterprise ecosystems? Those that can align structurally will survive and scale; those that cannot will be left outside the intelligent enterprise economy.

The coming correction in AI will not signal collapse. It will signal completion. When ERP systems record every transaction, AI factories govern decisions, digital twins simulate operations, and robots act in real time, the enterprise becomes a single intelligent organism capable of Zero-Latency Decisions™. This is not an AI bubble bursting; it is the architecture solidifying for the G2000 companies. The AI-ENABLED Enterprise™ is the operating model of the modern economy.

Let me know what you think. Please contact me at Honorio@ExperienceBypass.com

#AI #EnterpriseAI #AINative #DigitalTwins #Robotics #ERP #Azure #NVIDIA #Palantir #C3AI #IBM #WatsonX #AIFactory #ZeroLatency #AIEnabledEnterprise #FutureOfWork #Automation #SupplyChainAI #IndustrialAI #Investing #TechStrategy